What Are MCP Apps? The Next Generation of AI Tools

MCP apps are interactive tools that live inside AI assistants like ChatGPT and Claude. Here's what makes them different from chatbots, plugins, and traditional apps.

MCP apps are interactive tools that live inside AI assistants. They run inside ChatGPT, Claude, Cursor, and dozens of other AI clients — not as standalone websites or mobile apps, but as capabilities the AI can discover, invoke, and present to users mid-conversation. If chatbots were the first generation and plugins were the second, MCP apps are the third.

The term "MCP app" is still relatively new, so let me be precise about what it means. An MCP app is a tool built on the Model Context Protocol that exposes functionality — actions, data, or interactive UI — to any AI client that speaks the protocol. The AI decides when to use it. The user never leaves the conversation.

How we got here

The idea of AI tools is not new. But the implementations kept failing in ways that are worth understanding, because MCP apps specifically fix those failure modes.

ChatGPT plugin beta (2023)

OpenAI announced a ChatGPT plugin beta in March 2023. The idea was right: let ChatGPT call external APIs. But it never really worked. Access was extremely limited, the tools were unreliable, and the implementation was locked to ChatGPT, with a proprietary spec and no standard for rich responses. You built for one platform that almost nobody could use. OpenAI quietly shut the beta down in favor of Custom GPTs, and later adopted MCP.

Custom GPTs

Custom GPTs let anyone wrap instructions and API calls into a shareable package inside ChatGPT. Better UX than the plugin beta, but still platform-locked. No way to use a Custom GPT in Claude or Cursor. And no interactive widgets — responses were text-only.

Browser extensions and chatbots

Companies built browser extensions and embeddable chatbots as a stopgap. These work, but they live outside the AI conversation. Users have to context-switch between the chatbot widget and their actual AI assistant, and the result is a fragmented experience.

The common failure

Every approach before MCP had the same fundamental problem: vendor lock-in. You built for one platform, and when users moved to a different AI client, your tool did not follow. You were back to rebuilding.

MCP changed that. Anthropic launched it in November 2024 as an open standard, and within months OpenAI, Google, Microsoft, and dozens more adopted it. Now there is one protocol, one codebase, and every compliant client can use your tool.

MCP apps are tools built on that standard.

What makes something an MCP app

Not every MCP server is an "MCP app" in the way I am using the term here. A bare-bones MCP server that returns a JSON blob is technically compliant but not really an app. When I say MCP app, I mean something that has three qualities:

It is AI-native

The tool is designed to be discovered and invoked by an AI, not by a human clicking buttons. The tool description is written in natural language so the AI knows when to use it. The input schema is structured so the AI can construct valid requests from conversational context. The output is formatted for rendering inside an AI client.

This is a meaningful distinction. A REST API is designed for developers. A website is designed for humans browsing. An MCP app is designed for an AI assistant mediating between the user and the tool.
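To make "AI-native" concrete, here is a minimal sketch of what a tool definition can look like. The field names (`name`, `description`, `inputSchema`) follow the MCP tool definition; the ticket tool itself, its values, and the `check_arguments` helper are hypothetical, and the helper covers only a small slice of JSON Schema.

```python
# Hypothetical tool descriptor. The field names follow the MCP tool
# definition; the tool and its values are invented for illustration.
CREATE_TICKET_TOOL = {
    "name": "create_support_ticket",
    "description": (
        "Create a support ticket. Use when the user reports a problem "
        "and wants it tracked by the support team."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "subject": {"type": "string"},
            "priority": {"type": "string", "enum": ["low", "normal", "high"]},
        },
        "required": ["subject"],
    },
}

# Maps JSON Schema type names to Python types (basic types only).
_JSON_TYPES = {"string": str, "number": (int, float), "integer": int, "boolean": bool}


def check_arguments(tool: dict, arguments: dict) -> list[str]:
    """Return a list of problems; an empty list means the call looks valid.

    Checks required keys, basic types, and enums. This is not a full
    JSON Schema validator.
    """
    schema = tool["inputSchema"]
    problems = []
    for key in schema.get("required", []):
        if key not in arguments:
            problems.append(f"missing required argument: {key}")
    for key, value in arguments.items():
        spec = schema["properties"].get(key)
        if spec is None:
            problems.append(f"unknown argument: {key}")
            continue
        expected = _JSON_TYPES.get(spec.get("type"))
        if expected and not isinstance(value, expected):
            problems.append(f"{key} should be of type {spec['type']}")
        if "enum" in spec and value not in spec["enum"]:
            problems.append(f"{key} must be one of {spec['enum']}")
    return problems
```

The description tells the AI when to reach for the tool, and the schema tells it what a valid request looks like. A real server would typically lean on a JSON Schema library rather than a hand-rolled check like this one.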

It uses MCP primitives

The MCP specification defines three primitives that servers can expose:

Tools are actions the AI can execute. "Search for flights," "create a support ticket," "generate a report." Each tool has a name, a natural language description, and a JSON Schema defining its inputs. Tools are the most common primitive — they are the verbs of MCP.

Resources are data the AI can read. A database schema, a product catalog, a company knowledge base. Resources give the AI context to use tools more effectively. An analytics tool works better when the AI understands the data model.

Prompts are pre-written templates that guide the AI's behavior. "When showing product results, use the carousel layout." Prompts are the least used primitive, but they let tool builders influence how the AI presents results.

Most MCP apps use tools extensively, resources occasionally, and prompts sparingly.
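As a rough illustration of the three primitives side by side, the sketch below keeps one of each in an in-memory catalog and answers the protocol's `tools/list`, `resources/list`, and `prompts/list` methods. The catalog entries, the `schema://` URI, and the `handle_list` helper are assumptions made up for this example; a real server would use one of the official SDKs rather than a dictionary.

```python
# A toy in-memory catalog of what one server exposes.
# All names, URIs, and descriptions are invented for illustration.
SERVER_CATALOG = {
    "tools": [
        {
            "name": "generate_report",
            "description": "Generate a sales report for a date range.",
            "inputSchema": {
                "type": "object",
                "properties": {
                    "start": {"type": "string"},
                    "end": {"type": "string"},
                },
                "required": ["start", "end"],
            },
        }
    ],
    "resources": [
        {
            "uri": "schema://analytics/tables",
            "name": "Analytics data model",
            "mimeType": "application/json",
        }
    ],
    "prompts": [
        {
            "name": "present_products",
            "description": "Guidance for laying out product results.",
        }
    ],
}


def handle_list(method: str) -> dict:
    """Answer a `<primitive>/list` request from the catalog."""
    primitive, _, action = method.partition("/")
    if action != "list" or primitive not in SERVER_CATALOG:
        raise ValueError(f"unsupported method: {method}")
    # The result is keyed by the primitive name, e.g. {"tools": [...]}.
    return {primitive: SERVER_CATALOG[primitive]}
```

Calling `handle_list("tools/list")` returns the tool definitions the AI client uses for discovery; the other two methods work the same way for resources and prompts.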

It renders inside AI conversations

The output of an MCP app is not a standalone page — it shows up inline in the conversation. In ChatGPT, tools can return interactive widgets — product cards, charts, forms, data tables — through the Apps SDK. In other clients, the rendering varies, but the intent is the same: the user sees results without leaving the chat.

This is the part that makes MCP apps feel different from API integrations. An API integration fetches data and you build the UI yourself. An MCP app handles both — the data and the presentation — inside the AI client's interface.

MCP apps vs. everything else

Worth drawing the lines clearly.

graph LR
  subgraph traditional["Traditional Web App"]
    direction LR
    U1["User"] --> B["Open browser"] --> N["Navigate to URL"] --> L["Log in"] --> S["Search / click"] --> R1["See results"]
  end
  subgraph mcp["MCP App"]
    direction LR
    U2["User"] --> A["Ask AI in natural language"] --> R2["See results inline"]
  end
  style traditional fill:#fef2f2,stroke:#dc2626
  style mcp fill:#f0fdf4,stroke:#16a34a

vs. traditional web apps

A web app lives at a URL. Users navigate to it, log in, click around. An MCP app lives inside an AI conversation. Users ask for something in natural language, and the AI invokes the tool. No navigation, no separate login, no context switching.

Web apps are not going away — they handle complex workflows that conversational interfaces cannot. But for single-purpose tools, lookups, dashboards, and transactional tasks, the conversational interface is often faster.

vs. chatbots

A chatbot is a scripted conversation interface, usually embedded on a website. It follows decision trees or uses basic NLP to route queries. An MCP app is a tool that a frontier AI model decides when and how to use. The reasoning, context awareness, and natural language understanding come from the AI client, not from the tool itself.

The tool does not need to understand language. It just needs to define what it does and what it needs. The AI handles everything else.
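Here is a minimal sketch of that division of labor, assuming the JSON-RPC 2.0 message shape MCP uses for `tools/call`. The `lookup_deal_status` tool, its data, and the `handle_request` helper are hypothetical. Notice that the tool body is a plain dictionary lookup: by the time the request arrives, the AI has already turned the user's sentence into structured arguments.

```python
import json


def lookup_deal_status(arguments: dict) -> str:
    """Hypothetical tool body: a plain lookup, no language understanding."""
    deals = {"Acme": "Negotiation"}  # stand-in for a real CRM call
    return deals.get(arguments["account"], "not found")


# Registry of tool name -> handler function.
TOOL_HANDLERS = {"lookup_deal_status": lookup_deal_status}


def handle_request(raw: str) -> str:
    """Handle one JSON-RPC 2.0 `tools/call` request and return the response."""
    request = json.loads(raw)
    params = request["params"]
    handler = TOOL_HANDLERS.get(params["name"])
    if handler is None:
        # Unknown tool: report a JSON-RPC "invalid params" error.
        response = {
            "jsonrpc": "2.0",
            "id": request["id"],
            "error": {"code": -32602, "message": f"Unknown tool: {params['name']}"},
        }
    else:
        text = handler(params.get("arguments", {}))
        response = {
            "jsonrpc": "2.0",
            "id": request["id"],
            "result": {"content": [{"type": "text", "text": text}], "isError": False},
        }
    return json.dumps(response)
```

A user asking "What is the status of the Acme deal?" would reach this code as `{"name": "lookup_deal_status", "arguments": {"account": "Acme"}}`, with the natural language already handled by the AI client.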

vs. browser extensions

Browser extensions modify or augment web pages. They live in the browser chrome, not inside AI conversations. An MCP app is invoked by the AI, not by the user clicking an extension icon. Different trigger, different context, different experience.

vs. Custom GPTs

Custom GPTs are ChatGPT-specific wrappers around instructions and API calls. They work only in ChatGPT. An MCP app works in ChatGPT, Claude, Cursor, VS Code Copilot, Windsurf, and 70+ other clients. Same tool, every platform.

Build once, run everywhere

This is the value proposition that makes the whole thing work.

Before MCP, if you wanted your tool to work in ChatGPT, Claude, and Cursor, you built three different integrations. Each platform had its own API format, its own authentication model, its own way of defining tools. This is the N × M problem: N AI clients times M tools means N × M custom integrations.

MCP reduces that to N plus M: each client implements the protocol once, and each tool ships as one server. Build one MCP server. Every client that speaks MCP can use it.
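The arithmetic is easy to check. The numbers below (5 clients, 20 tools) are made up for illustration, and the function names are my own:

```python
def integrations_without_standard(clients: int, tools: int) -> int:
    """Every tool needs a custom integration for every client."""
    return clients * tools


def integrations_with_standard(clients: int, tools: int) -> int:
    """Each client and each tool implements the shared protocol once."""
    return clients + tools


clients, tools = 5, 20
print(integrations_without_standard(clients, tools))  # 100
print(integrations_with_standard(clients, tools))     # 25
```

The gap widens as either side grows, which is why a shared protocol matters more as the number of clients increases.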

graph LR
  Server["Your MCP Server"] --- ChatGPT["ChatGPT"]
  Server --- Claude["Claude"]
  Server --- Cursor["Cursor"]
  Server --- VSCode["VS Code Copilot"]
  Server --- Windsurf["Windsurf"]
  Server --- More["70+ other clients"]
  style Server fill:#e0e7ff,stroke:#4f46e5,stroke-width:2px,color:#1e1b4b

The MCP GitHub repository has over 80,000 stars. There are now over 10,000 published MCP servers. SDKs exist in 10 languages — TypeScript, Python, Java, C#, Go, Rust, Ruby, PHP, Swift, and Kotlin. The protocol moved to the Linux Foundation for governance, which means no single company controls the standard.

This is not a bet on one company's ecosystem. It is an open standard with broad adoption.

Real-world examples

The abstract description only gets you so far. Here is what MCP apps look like in practice.

Product search with interactive cards

An e-commerce MCP app that takes a search query and budget, calls a product API, and returns a carousel of product cards with images, prices, ratings, and "add to cart" buttons. The user asks "find me running shoes under $150" and sees clickable product cards inline in ChatGPT. No website visit required.
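A rough sketch of the server side of that flow might look like the following. The in-memory catalog stands in for a real product API, and the product names, the `search_products` function, and the shape of the `products` payload are all invented for illustration. The result pairs a text summary with structured data, as MCP tool results allow; how a given client turns that data into cards or a carousel is client-specific and not shown here.

```python
from dataclasses import dataclass, asdict


@dataclass
class Product:
    name: str
    price: float
    rating: float


# Stand-in for a real product API; the data is invented.
CATALOG = [
    Product("Trail Runner X", 129.0, 4.6),
    Product("Road Racer Pro", 179.0, 4.8),
    Product("Daily Jogger", 89.0, 4.2),
]


def search_products(query: str, max_price: float) -> dict:
    """Return a tool result with a text summary and structured product data."""
    words = query.lower().split()
    # Keep products within budget whose name contains any query word.
    matches = [
        p for p in CATALOG
        if p.price <= max_price and any(w in p.name.lower() for w in words)
    ]
    # Highest-rated products first.
    matches.sort(key=lambda p: p.rating, reverse=True)
    summary = f"Found {len(matches)} product(s) under ${max_price:.0f}."
    return {
        "content": [{"type": "text", "text": summary}],
        "structuredContent": {"products": [asdict(p) for p in matches]},
    }
```

In this sketch the AI would translate "find me running shoes under $150" into a call along the lines of `search_products("runner jogger", 150)`; the naive keyword match is only there to keep the example short.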

Weather dashboards

A weather tool that takes a city name and returns a stat card with current conditions, a line chart with the 7-day forecast, and a table with hourly breakdowns. All rendered as interactive widgets in the conversation.

CRM integrations

A sales tool that connects to Salesforce or HubSpot, lets sales reps check deal status, update contacts, and log activities — all through natural language in their AI assistant. "What is the status of the Acme deal?" returns a structured card with deal stage, value, next steps, and contact info.

Booking flows

A reservation tool with form widgets for dates, party size, and preferences. The user says "book a table for 4 on Friday evening" and sees an interactive form to confirm details, then gets a confirmation card with the booking reference.

For more examples and a deep dive on widget design, see Building MCP Tools with Rich UIs.

The ecosystem right now

The MCP ecosystem has matured faster than most people expected.

The specification is maintained on GitHub and governed by the Linux Foundation. The core team includes engineers from Anthropic, and the protocol has contributions from across the industry.

On the client side, the major players are all in. ChatGPT, Claude, Gemini, Cursor, VS Code Copilot, Windsurf, JetBrains IDEs, and many more support MCP. We maintain a detailed MCP client comparison if you want the feature matrix.

On the server side, there are over 10,000 published MCP servers covering everything from file systems to databases to SaaS integrations. The pace of new servers is accelerating as the tooling matures.

The platform shift

I keep coming back to the same analogy. Every major platform shift follows the same pattern:

Websites (2000s) — A new interface (the browser) became how people accessed information. Businesses needed a web presence. WordPress, Squarespace, and Wix made it possible for anyone to build one.

Mobile apps (2010s) — A new interface (the smartphone) became how people accessed services. Businesses needed mobile apps. The App Store and React Native made mobile development accessible.

AI apps (2020s) — A new interface (the AI assistant) is becoming how people interact with tools. Businesses need to show up inside AI conversations. MCP and visual builders are making that accessible.

| Era | Interface | What businesses needed | Enablers |
|---|---|---|---|
| 2000s | The browser | A web presence | WordPress, Squarespace, Wix |
| 2010s | The smartphone | Mobile apps | App Store, React Native |
| 2020s | The AI assistant | AI-native tools | MCP, visual builders |

Over 1.2 billion people use AI assistants monthly. AI-referred traffic is growing at 357% year over year. The users are already there. The protocol is standardized. The tooling is catching up.

MCP apps are how you show up in that new interface.

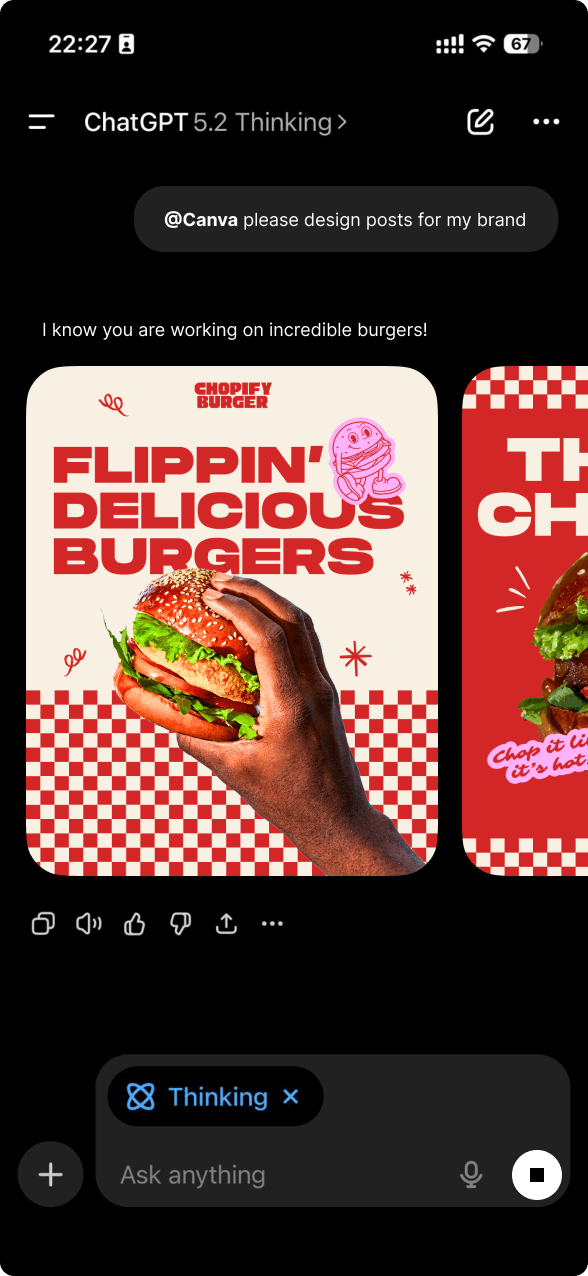

How drio fits

Building an MCP app from scratch means understanding the protocol spec, writing server code in TypeScript or Python, handling authentication, deploying infrastructure, and testing across multiple clients. It is a real engineering project.

drio is a visual builder for MCP apps. You design your tools on a canvas, connect APIs visually, map data to widget primitives, and deploy with one click. The builder compiles your configuration into a spec-compliant MCP server — no code required.

That is the same pattern that Shopify used for e-commerce and WordPress used for websites. Take the technical complexity, put it behind a visual interface, and let more people build.

Key takeaways

- MCP apps are interactive tools built on the Model Context Protocol that live inside AI assistants.

- They differ from chatbots, plugins, and web apps because they are AI-native, built on an open protocol, and rendered inside conversations.

- The three MCP primitives — tools, resources, prompts — define what an MCP app can expose.

- Build once, run everywhere: one MCP server works across 70+ AI clients.

- The ecosystem has over 10,000 servers, SDKs in 10 languages, and is governed by the Linux Foundation.

- MCP apps are the third wave of platform tools — after websites and mobile apps.