What Are ChatGPT Apps? A Complete Guide for 2026

What ChatGPT apps are, how they relate to GPTs and MCP, and what "connected apps" actually means in ChatGPT today.

ChatGPT apps are external tools and data sources you can connect inside ChatGPT. Some render rich UI in the chat. Some search across your data. Some can take actions on your behalf. In OpenAI's current terminology, the old word "connectors" has been folded into the broader word apps, which is why a lot of older screenshots and docs feel slightly out of sync.

Here is the clean mental model that makes the whole category much less confusing. The app is the user-facing experience in ChatGPT. The MCP server is the technical system behind the scenes. A connected app is just an app you or your workspace have already authorized. Keep those three separate and the rest starts to click.

The shortest useful definition

If you only need the practical answer, this is it:

- A ChatGPT app is a capability that brings an outside service into a ChatGPT conversation.

- A connected app is that same app after you click Connect and finish the auth flow.

- An MCP server is the tool and data layer ChatGPT can call behind the app.
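To make the three terms concrete, here is a minimal sketch of how they relate. It is only a mental model, not OpenAI's data model: the class names, fields, the `https://example.com/mcp` URL, and the `search_docs` tool are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Set, Tuple


@dataclass(frozen=True)
class McpServer:
    """The tool and data layer behind an app (hypothetical model)."""
    url: str
    tool_names: Tuple[str, ...]


@dataclass
class App:
    """The user-facing capability in ChatGPT (hypothetical model)."""
    name: str
    server: McpServer
    # Accounts or workspaces that clicked Connect and finished the auth flow.
    authorized_accounts: Set[str] = field(default_factory=set)

    def connect(self, account_id: str) -> None:
        self.authorized_accounts.add(account_id)

    def is_connected(self, account_id: str) -> bool:
        # "Connected" is account state, not a different kind of app.
        return account_id in self.authorized_accounts


server = McpServer(url="https://example.com/mcp", tool_names=("search_docs",))
app = App(name="Example Docs", server=server)

before = app.is_connected("user-123")
app.connect("user-123")
after = app.is_connected("user-123")
print(before, after)  # prints: False True
```

The point of the sketch is that a connected app is the same `App` object with one more authorized account, not a third kind of thing.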

OpenAI's current help docs describe apps as the way to bring "tools and data" into ChatGPT so you can search, reference, and work without leaving the conversation. The same help page also makes clear that apps can show up in a few different shapes:

- Interactive apps with rich in-chat UI

- Search apps that pull relevant third-party context into the conversation

- Deep research apps that help with multi-source analysis and citations

- Sync apps that index content ahead of time for faster answers

- Write action apps that can create or update things after user confirmation

That matters because "ChatGPT app" is not one narrow product format. It is now the umbrella term for connected capabilities inside ChatGPT.
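One way to picture the five shapes is as a simple enumeration, with write actions as the only shape gated on user confirmation. This is an illustrative sketch based on the list above; the enum, its values, and the `may_execute` helper are assumptions, not anything OpenAI publishes.

```python
from enum import Enum


class AppShape(Enum):
    """The app shapes listed above (names are illustrative)."""
    INTERACTIVE = "interactive"
    SEARCH = "search"
    DEEP_RESEARCH = "deep_research"
    SYNC = "sync"
    WRITE_ACTION = "write_action"


def may_execute(shape: AppShape, user_confirmed: bool) -> bool:
    """Return True if a request of this shape may proceed.

    Assumption: only write actions wait for explicit user confirmation.
    """
    if shape is AppShape.WRITE_ACTION:
        return user_confirmed
    return True


print(may_execute(AppShape.SEARCH, user_confirmed=False))
print(may_execute(AppShape.WRITE_ACTION, user_confirmed=False))
```

A single app can combine several shapes, so in practice a check like this would apply per request rather than per app.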

Why the name got confusing

A lot of the confusion comes down to timeline.

OpenAI's third-party tool story changed a few times, and each version came with new naming:

| Era | What it was | What happened |
|---|---|---|
| Plugins | Third-party API integrations for ChatGPT | OpenAI deprecated them. Plugin conversations stopped working on April 9, 2024. |
| GPTs | Custom versions of ChatGPT with instructions, knowledge, capabilities, and optional external connections | Still current. GPTs are now a distinct ChatGPT construct, not the same thing as apps. |
| Apps | The current umbrella for connected capabilities in ChatGPT, including directory apps and custom apps | OpenAI introduced apps on October 6, 2025, and opened app submissions on December 17, 2025. |

That is why you still see all three terms in the wild.

The plugin era is over. The GPT layer still exists. The app layer is the newer connected-runtime model OpenAI is pushing now.

If you want the deeper comparison, ChatGPT Plugins vs Custom GPTs vs MCP Apps breaks the three generations out in more detail.

What replaced plugins, exactly?

Not one thing. More like two successive moves.

First, OpenAI replaced the plugin beta with GPTs. The Help Center is explicit here: plugin conversations could no longer be continued after April 9, 2024, and OpenAI said GPTs were the replacement path.

Later, OpenAI introduced apps in ChatGPT as the new way to bring richer, connected experiences into chat. On top of that, the Help Center now says "connectors are now apps."

So the clean version is:

- Plugins were the old beta system.

- GPTs were the first big replacement and are still important today.

- Apps are the newer connected experience model, especially for directory apps, custom apps, and MCP-backed integrations.

That also explains why old posts that say "plugins were replaced by GPTs" are not wrong, just incomplete now.

Where GPTs fit now

GPTs are still very much part of ChatGPT.

OpenAI's current GPT docs define them as custom versions of ChatGPT configured for a specific purpose. A GPT can include:

- instructions

- uploaded knowledge

- built-in capabilities

- apps

- actions

One detail that matters a lot: according to OpenAI's current Help Center, a GPT can use either apps or actions, but not both at the same time.
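The either-or rule is easy to express as a validation check. The sketch below is a hypothetical model of a GPT configuration, not OpenAI's schema; `GptConfig` and its field names are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class GptConfig:
    """Hypothetical GPT configuration that enforces apps XOR actions."""
    instructions: str
    apps: List[str] = field(default_factory=list)
    actions: List[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Per the Help Center rule described above: apps or actions, not both.
        if self.apps and self.actions:
            raise ValueError("A GPT can use apps or actions, but not both.")


# Valid: apps only.
support_gpt = GptConfig(instructions="Answer support questions.", apps=["Example Docs"])

# Invalid: mixing apps and actions is rejected.
try:
    GptConfig(instructions="Mixed.", apps=["Example Docs"], actions=["create_ticket"])
    rejected = False
except ValueError:
    rejected = True

print(rejected)  # prints: True
```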

That gives you a useful distinction:

- A GPT is a customized ChatGPT experience.

- An app is an external capability ChatGPT can connect to.

Sometimes they work together. A GPT can rely on apps. But they are not the same thing.

If you just want a specialized assistant with instructions and maybe some knowledge files, a GPT might be enough. If you want ChatGPT to connect to an outside service, search live data, trigger actions, or render a richer in-chat experience, you are usually thinking about apps.

How apps work in ChatGPT today

The current user flow is simpler than most people expect.

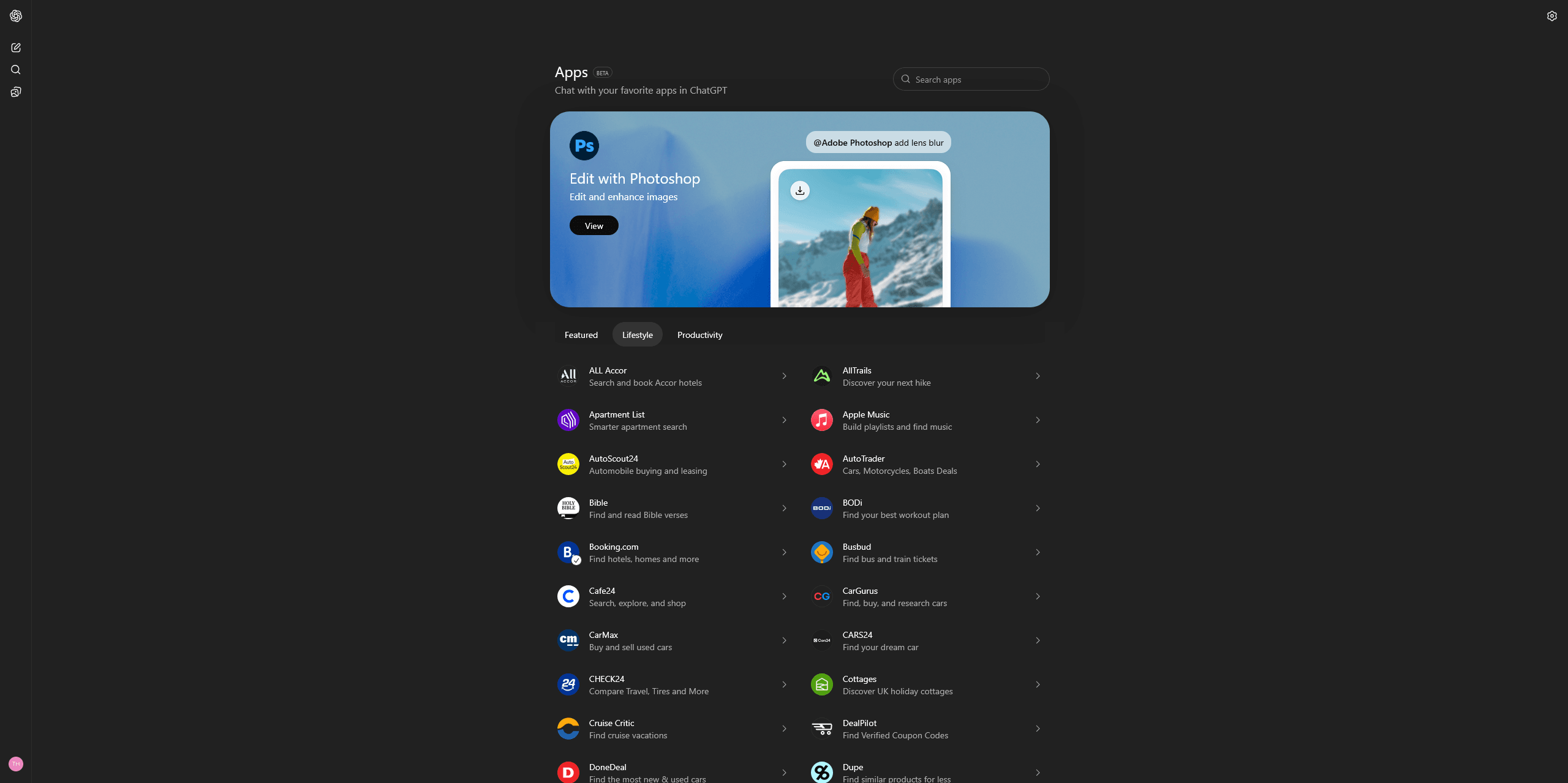

OpenAI's apps help page says users can browse apps from the ChatGPT app directory, from Settings → Apps, or from the Apps entry in the sidebar. Once an app is available for your plan or workspace, you click Connect, complete the auth flow if needed, and then use it inside chat.

After that, apps can show up in a few ways:

- you can call them explicitly with an @mention

- you can pick them from the app picker

- ChatGPT can suggest them when they fit the conversation

That is the user-facing part.

On the builder side, OpenAI's current docs say you can also build your own custom app for your own tools or internal data. The same Help Center page notes that "custom connectors" were renamed to custom apps. So if you are reading older setup guides, that terminology change is one of the main reasons they feel stale.

The part most guides blur together

This is the distinction most people actually need:

| Term | What it means in practice |
|---|---|
| App | The thing the user sees in ChatGPT: directory listing, auth flow, capabilities, and often an in-chat UI |
| MCP server | The endpoint or service ChatGPT calls to discover tools, retrieve data, and perform actions |
| Connected app | An app that has already been enabled for a user or workspace |

That middle layer matters because people often ask "what is an MCP server in ChatGPT?" like it is the same thing as the app itself.

Not quite.

The official launch post says the Apps SDK builds on MCP. The current Help Center says developers can build apps using MCP so ChatGPT can call approved tools and retrieve information from services. That means MCP is the underlying protocol and tool surface, but the app is the broader user-facing package around it.

In other words:

- MCP is the plumbing.

- The app is the experience.

- Connected is just the account state.
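To show what the plumbing looks like, here is a rough sketch of the two tool-related messages in MCP. MCP messages are JSON-RPC 2.0, and `tools/list` and `tools/call` are the method names the protocol defines for discovering and invoking tools. Everything else is an assumption for illustration: the `search_docs` tool, its schema, and the in-process `handle` function, which stands in for a real server and omits the initialization handshake, transport, and auth entirely.

```python
import json
from typing import Any, Dict

# Hypothetical tool catalog; the name and schema are made up for illustration.
TOOLS = [
    {
        "name": "search_docs",
        "description": "Search a hypothetical document store.",
        "inputSchema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    }
]


def _error(request_id: Any, code: int, message: str) -> Dict[str, Any]:
    """Build a JSON-RPC 2.0 error response."""
    return {"jsonrpc": "2.0", "id": request_id, "error": {"code": code, "message": message}}


def handle(request: Dict[str, Any]) -> Dict[str, Any]:
    """Answer a tools/list or tools/call request with a stubbed result."""
    request_id = request.get("id")
    method = request.get("method")
    if method == "tools/list":
        result: Dict[str, Any] = {"tools": TOOLS}
    elif method == "tools/call":
        params = request.get("params", {})
        if params.get("name") != "search_docs":
            return _error(request_id, -32602, "Unknown tool")
        query = params.get("arguments", {}).get("query", "")
        # A real server would query a backend here; this returns a stub.
        result = {"content": [{"type": "text", "text": f"Stub result for: {query}"}]}
    else:
        return _error(request_id, -32601, "Method not found")
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


list_response = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
call_response = handle(
    {
        "jsonrpc": "2.0",
        "id": 2,
        "method": "tools/call",
        "params": {"name": "search_docs", "arguments": {"query": "refund policy"}},
    }
)
print(json.dumps(call_response, indent=2))
```

Nothing in these messages covers directory listings, connection state, or in-chat UI, which is the part the app layer adds on top.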

That is also why "an app" is usually a better reader-facing term than "an MCP server," even when the server is the core technical artifact behind it.

If you want the protocol-side explanation, What Is MCP? is the better place to start.

How MCP fits into ChatGPT now

This is the short version.

MCP is not the marketing label users click on in ChatGPT. It is the technical standard underneath the modern app stack.

OpenAI's October 6, 2025 launch post said the Apps SDK is built on the Model Context Protocol. The current apps help docs say custom apps can be built using MCP so ChatGPT can call tools and retrieve information from services. And OpenAI's developer guidance around app-building keeps framing the job as defining a small set of well-scoped capabilities that the model can invoke at the right moment.

So when someone asks, "Are ChatGPT apps just MCP servers?", the right answer is roughly:

Modern ChatGPT apps are usually MCP-backed, but the app is larger than the raw server.

It often includes:

- auth and connection state

- app metadata and listing details

- UI behavior inside ChatGPT

- permissions and safety controls

- the MCP-backed tools underneath

That distinction matters because it keeps you from mixing up the protocol with the product surface.

If your question is more practical than conceptual, How to Add MCP Tools to ChatGPT is the step-by-step setup version.

What makes a ChatGPT app feel good instead of bolted on

OpenAI's own developer advice is useful here.

In What makes a great ChatGPT app, OpenAI keeps coming back to one idea: the best apps are not mini copies of your existing website. They are a small set of capabilities the model can reach for inside conversation.

That usually means an app adds one or more of three things:

- new context the model could not access on its own

- new actions the model could not perform on its own

- better presentation than plain text can provide

That framing is useful even if you are not building yet, because it explains why some apps feel natural in ChatGPT and some feel awkward. The good ones do not try to drag you into a whole separate product. They help at the point where the conversation actually needs them.

If you are trying to build one

If your goal is not just to understand the category but to ship something, the next step depends on what you mean by "build a ChatGPT app."

If you want to connect an MCP endpoint and test the setup flow, start with How to Add MCP Tools to ChatGPT.

If you are getting ready to publish to the directory, read 10 Things OpenAI Doesn't Tell You About Submitting a ChatGPT App before you touch the form. It will save you time.

If you want the no-code path, Build AI Apps Without Code is the better entry point.

That is also the lane drio fits into. The goal is not to be the definition of "ChatGPT apps" as a category. The goal is to make the build path easier once you already know the category is relevant.

FAQ

Are ChatGPT apps the same thing as GPTs?

No. GPTs are customized versions of ChatGPT. Apps are connected external capabilities ChatGPT can use. A GPT can use apps, but they are still different layers.

What is MCP in ChatGPT?

MCP is the underlying protocol OpenAI uses for the modern app stack. It is the tool and data layer that lets ChatGPT connect to external services in a standard way.

What is an MCP server in ChatGPT?

It is the backend service ChatGPT talks to for tools and data. The app is the user-facing experience around that capability.

What does "connected app" mean?

Just that the app has already been authorized on your account or workspace. It is not a separate product type.

Did apps fully replace GPTs?

No. GPTs still exist. Apps are the newer connected-capability layer inside ChatGPT, while GPTs remain the customizable assistant layer.

Takeaways

- ChatGPT apps are the current umbrella term for connected capabilities inside ChatGPT.

- Plugins are over. OpenAI ended plugin conversations on April 9, 2024.

- GPTs still matter and remain a separate concept from apps.

- Connectors were renamed to apps, which is why older docs often sound off.

- MCP is the protocol layer, not the whole user-facing app by itself.

If you just needed the shortest possible answer: a ChatGPT app is the experience, an MCP server is the machinery behind it, and a connected app is one you have already enabled.