How to Add MCP Tools to ChatGPT

Step-by-step guide to connecting MCP tools to ChatGPT — covers both manual server setup and the visual drio path, with screenshots and troubleshooting.

You can add custom MCP tools to ChatGPT in under 10 minutes. ChatGPT supports remote MCP servers over streamable HTTP — meaning any tool you build and deploy to a URL can be used directly inside ChatGPT conversations. This guide covers both paths: building a server from scratch with the TypeScript SDK and building visually with drio.

If you are not familiar with MCP yet, start with What Is MCP? for the background. This guide assumes you know what MCP tools are and want to get one working in ChatGPT.

What an MCP server is in ChatGPT

Before the setup steps, one useful clarification.

When ChatGPT asks for an MCP URL, it is asking for the URL of a remote MCP server. That server is the thing that exposes your tools, describes their schemas, and returns structured content when ChatGPT calls them.

So the setup flow is really:

- Build or deploy an MCP server

- Give ChatGPT the server URL

- Let ChatGPT discover the tools from that server
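To make "exposes your tools, describes their schemas" more concrete, here is a rough sketch of the kind of descriptor a server advertises for each tool. The field names name, description, and inputSchema follow the MCP specification. The get_weather values and the requiredKeysAreDeclared helper are illustrative assumptions, not something ChatGPT or drio provides.

```typescript
// Shape of one entry in a tools/list response (field names per the MCP spec).
interface ToolDescriptor {
  name: string;
  description: string;
  inputSchema: {
    type: "object";
    properties: Record<string, { type: string; description?: string }>;
    required?: string[];
  };
}

// Illustrative descriptor for the weather example used later in this guide.
const getWeatherTool: ToolDescriptor = {
  name: "get_weather",
  description: "Get current weather and 7-day forecast for any city",
  inputSchema: {
    type: "object",
    properties: { city: { type: "string", description: "City name" } },
    required: ["city"],
  },
};

// Hypothetical sanity check: every required key must be a declared property.
function requiredKeysAreDeclared(tool: ToolDescriptor): boolean {
  const declared = Object.keys(tool.inputSchema.properties);
  return (tool.inputSchema.required ?? []).every((key) => declared.includes(key));
}

console.log(requiredKeysAreDeclared(getWeatherTool)); // true
```

The client reads this descriptor to decide when to call the tool and which arguments to send, which is why a clear description and an accurate schema matter.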

If that part feels abstract, What Are ChatGPT Apps explains the ecosystem-level view. This post is the practical version.

Prerequisites

Before you start, you need:

- ChatGPT Plus, Team, or Enterprise — MCP tools are not available on the free plan as of March 2026.

- An MCP server — Either one you build yourself or one deployed through drio. We will cover both.

- A publicly accessible URL — ChatGPT connects to MCP servers over HTTPS. Your server needs to be reachable from the internet.

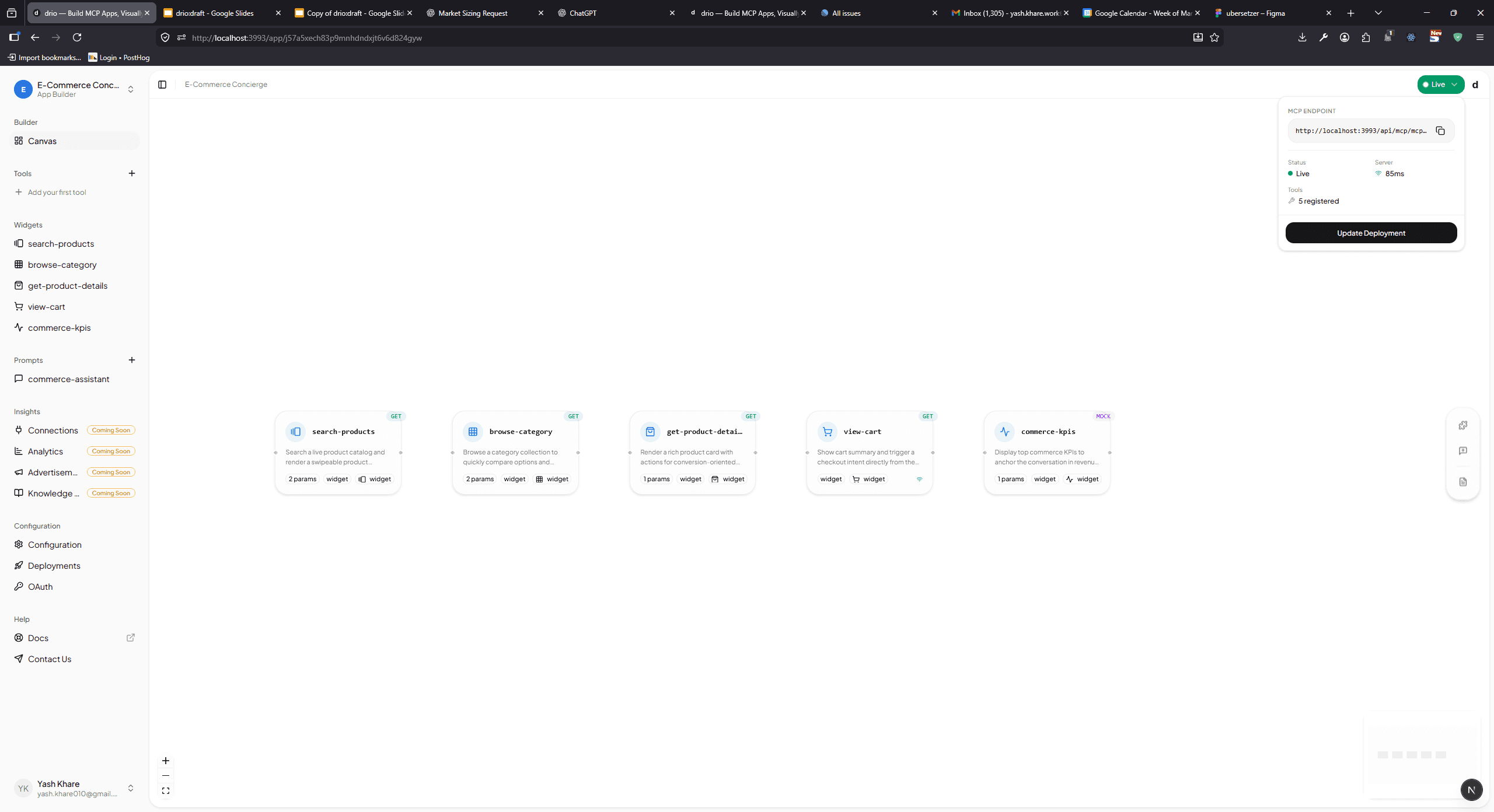

Path 1: Build and deploy with drio (10 minutes)

This is the fastest path. You build the tool visually, deploy with one click, and paste the URL into ChatGPT.

Step 1: Create your tool in drio

Open the drio builder and create a new tool. For this example, we will build a simple weather tool:

- Name: get_weather

- Description: "Get current weather and 7-day forecast for any city"

- Parameters: city (text, required)

Connect an API Request node to the Open-Meteo API and map the response to a Card widget (current conditions) and a Chart widget (forecast). If you need help with this step, our Connecting Your First API guide walks through it in detail.
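If it helps to think about the mapping in code terms, the sketch below shows roughly what "map the response to a Card and a Chart" amounts to. The Open-Meteo field names (current.temperature_2m, daily.time, daily.temperature_2m_max, daily.temperature_2m_min) match what the API returns when those variables are requested. The CardData and ChartPoint shapes are hypothetical; drio's actual widget schema may look different.

```typescript
// Subset of an Open-Meteo forecast response, assuming the request asked for
// current=temperature_2m and daily=temperature_2m_max,temperature_2m_min.
interface ForecastResponse {
  current: { temperature_2m: number };
  daily: {
    time: string[];
    temperature_2m_max: number[];
    temperature_2m_min: number[];
  };
}

// Hypothetical widget payloads, for illustration only.
interface CardData { title: string; value: string }
interface ChartPoint { date: string; high: number; low: number }

// Turn the raw API response into one card (current) and one chart series (forecast).
function toWidgets(
  city: string,
  data: ForecastResponse
): { card: CardData; chart: ChartPoint[] } {
  const card = {
    title: `Weather in ${city}`,
    value: `${data.current.temperature_2m}°C`,
  };
  const chart = data.daily.time.map((date, i) => ({
    date,
    high: data.daily.temperature_2m_max[i],
    low: data.daily.temperature_2m_min[i],
  }));
  return { card, chart };
}
```

In the visual builder you do the same thing by dragging fields from the response onto widget properties, with no code required.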

Step 2: Deploy

Click Deploy in the drio builder. Your tool compiles into a spec-compliant MCP server and deploys to the cloud. You get a URL:

https://app.getdrio.com/{your-app}/mcp

Copy this URL. That is all you need.

Step 3: Add to ChatGPT

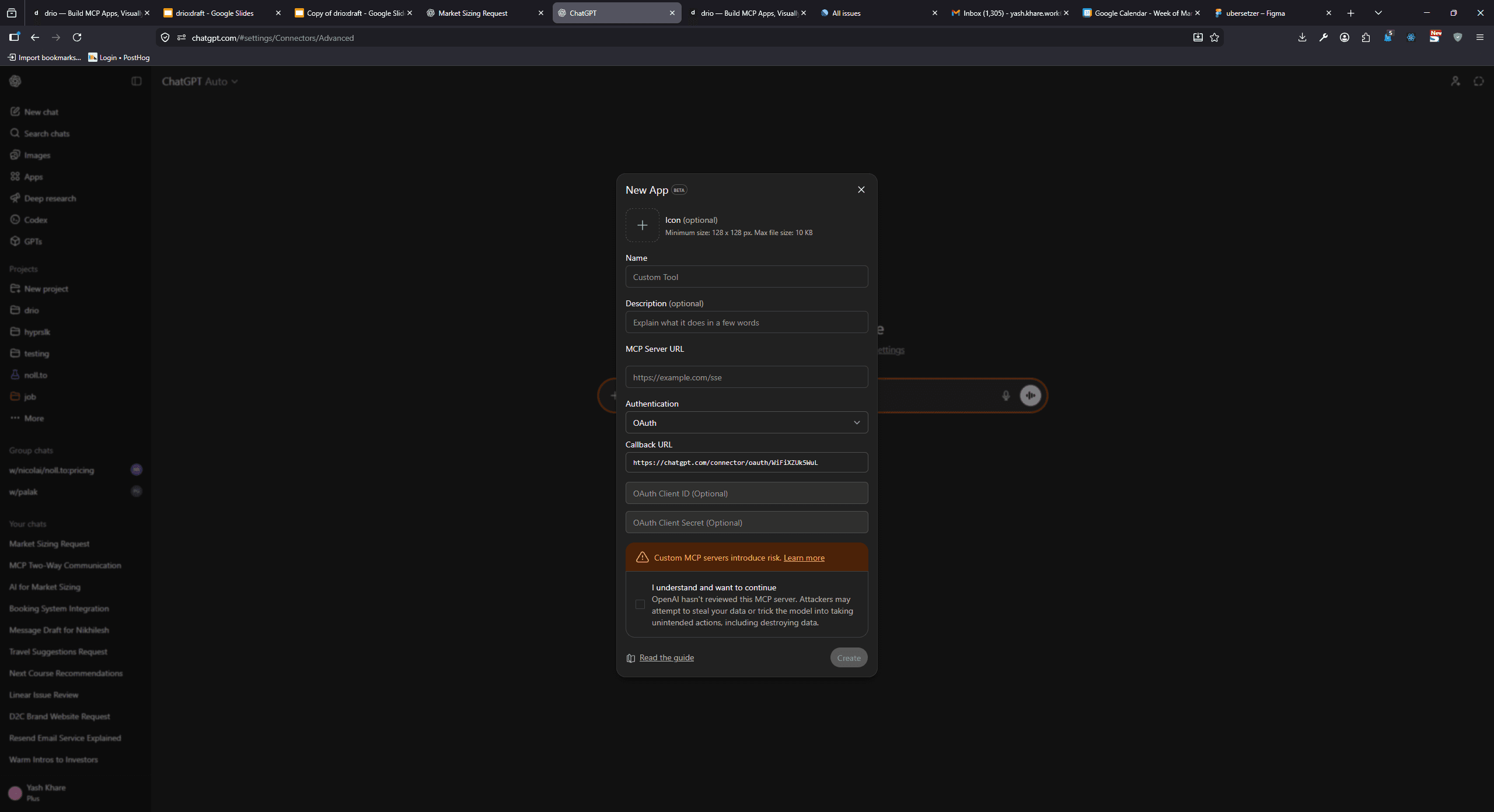

- Open ChatGPT

- Start a new conversation

- Click the tools icon in the input bar (or go to Settings > Connected Apps)

- Click Add MCP Tool (or Add custom integration)

- Paste your drio MCP URL

- Click Connect

ChatGPT will reach out to your server, perform the MCP initialization handshake, and discover your available tools. You should see your tool appear in the tools list within a few seconds.

    sequenceDiagram
      autonumber
      participant User
      participant ChatGPT
      participant MCP as drio MCP endpoint
      User->>ChatGPT: Add custom MCP URL
      ChatGPT->>MCP: Initialize connection
      MCP-->>ChatGPT: Advertise available tools
      User->>ChatGPT: Ask a question
      ChatGPT->>MCP: Invoke matching tool
      MCP-->>ChatGPT: Return structured content
      ChatGPT-->>User: Render tool output inline
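For readers who want to see what travels over the wire during that handshake, here is a minimal sketch of the JSON-RPC messages an MCP client sends. The method names (initialize, notifications/initialized, tools/list, tools/call) and the Accept header come from the MCP specification. The request helper, the client name, and the specific protocolVersion value are assumptions for illustration; ChatGPT's actual payloads may include more fields.

```typescript
// JSON-RPC 2.0 request envelope used by MCP.
interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

let nextId = 1;

// Hypothetical helper that stamps each request with an incrementing id.
function request(method: string, params?: Record<string, unknown>): JsonRpcRequest {
  return { jsonrpc: "2.0", id: nextId++, method, ...(params ? { params } : {}) };
}

// 1. The client opens the session and states which protocol version it speaks.
const initialize = request("initialize", {
  protocolVersion: "2025-06-18", // assumption: the client may offer another version
  capabilities: {},
  clientInfo: { name: "example-client", version: "0.0.1" }, // hypothetical client
});

// 2. After the server replies, the client confirms with a notification (no id).
const initialized = { jsonrpc: "2.0", method: "notifications/initialized" };

// 3. The client asks which tools exist.
const listTools = request("tools/list");

// 4. Later, the client invokes a tool by name with arguments.
const callTool = request("tools/call", {
  name: "get_weather",
  arguments: { city: "Berlin" },
});

// Headers a streamable HTTP client sends with each POST to the MCP endpoint.
const headers = {
  "Content-Type": "application/json",
  Accept: "application/json, text/event-stream",
};

console.log([initialize, listTools, callTool].map((m) => m.method));
```

Seeing these messages in your server logs is a quick way to confirm that ChatGPT actually reached your endpoint.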

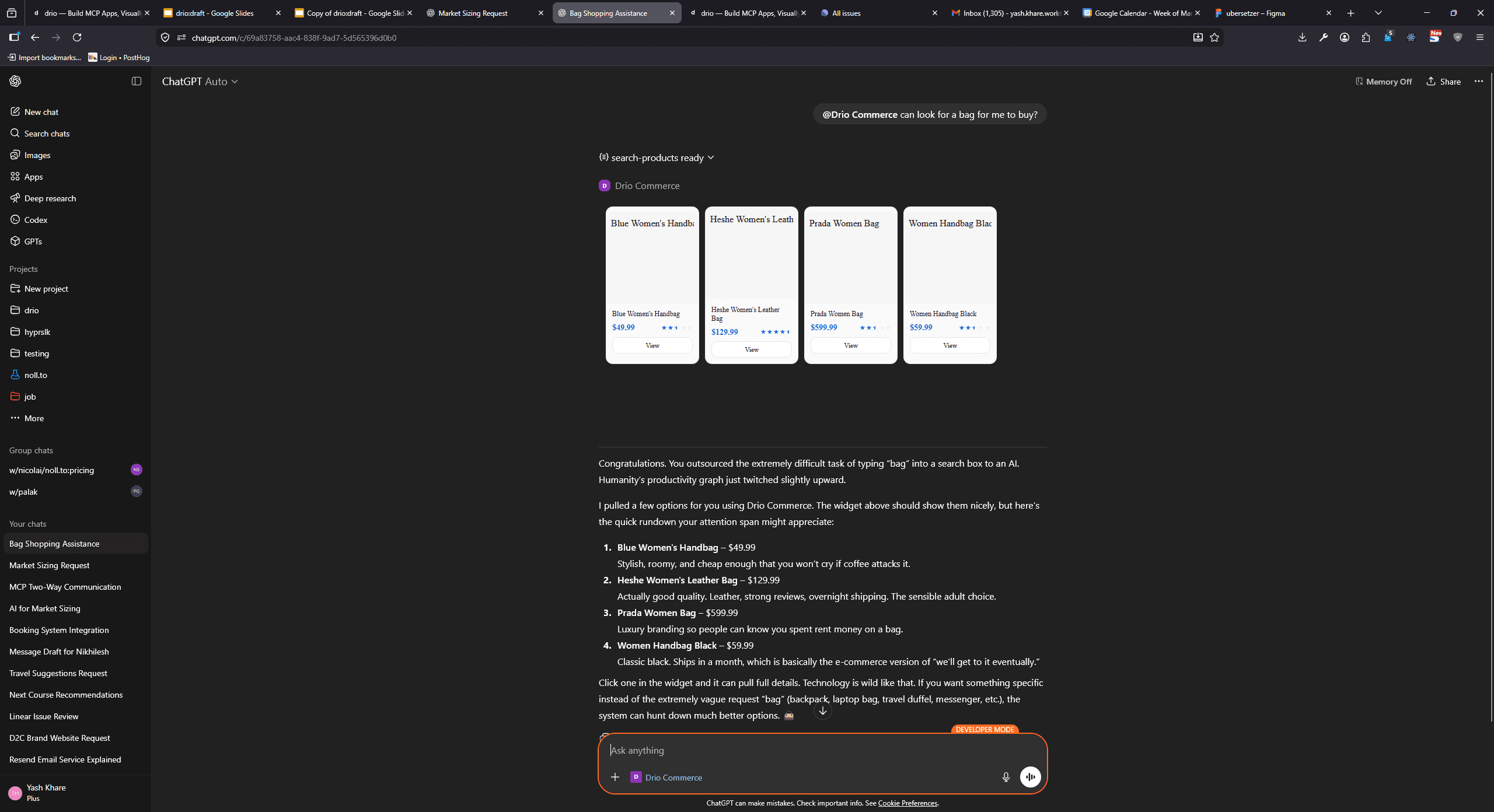

Step 4: Test it

Type a message that should trigger your tool: "What is the weather in Berlin?" ChatGPT will recognize the intent, invoke your MCP tool, and render the response — including your widgets — inline in the conversation.

That is it. Your custom MCP tool is live in ChatGPT.

Path 2: Build from scratch with the TypeScript SDK (30-60 minutes)

If you want full control over the server implementation, you can build an MCP server from scratch using the official TypeScript SDK. The MCP build guide has the complete walkthrough — here is the condensed version.

Step 1: Set up the project

    mkdir my-mcp-server && cd my-mcp-server
    npm init -y
    npm install @modelcontextprotocol/sdk zod express

Step 2: Define your tool

Create server.ts:

    import { McpServer } from "@modelcontextprotocol/sdk/server/mcp.js";
    import { StreamableHTTPServerTransport } from "@modelcontextprotocol/sdk/server/streamableHttp.js";
    import { z } from "zod";

    const server = new McpServer({
      name: "weather-server",
      version: "1.0.0",
    });

    // Register the tool: name, description, input schema (zod), handler.
    server.tool(
      "get_weather",
      "Get current weather for a city",
      { city: z.string().describe("City name") },
      async ({ city }) => {
        // Coordinates are hardcoded to Berlin to keep the example short.
        const response = await fetch(
          `https://api.open-meteo.com/v1/forecast?latitude=52.52&longitude=13.41&current=temperature_2m`
        );
        const data = await response.json();
        return {
          content: [
            {
              type: "text",
              text: `Weather in ${city}: ${data.current.temperature_2m}°C`,
            },
          ],
        };
      }
    );

This is a minimal example: it hardcodes Berlin's coordinates, so the city argument only changes the label in the output. A production server would geocode the city name, handle errors, and return structured widget content instead of plain text.
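As a rough sketch of what "geocode the city name and handle errors" could look like, the code below resolves the city through Open-Meteo's geocoding endpoint and returns an MCP error result instead of throwing. The geocoding URL and the isError field are real. The FetchJson type and the geocode, forecastUrl, and getWeather helpers are names invented for this example, and the fetch function is injected so the logic can be exercised without network access.

```typescript
// Minimal fetch signature so the lookup can be tested with a stub.
type FetchJson = (url: string) => Promise<unknown>;

interface Coordinates { latitude: number; longitude: number }

// Resolve a city name to coordinates with the Open-Meteo geocoding endpoint.
async function geocode(city: string, fetchJson: FetchJson): Promise<Coordinates> {
  const url =
    "https://geocoding-api.open-meteo.com/v1/search" +
    `?name=${encodeURIComponent(city)}&count=1`;
  const data = (await fetchJson(url)) as { results?: Coordinates[] };
  const first = data.results?.[0];
  if (!first) throw new Error(`No location found for "${city}"`);
  return { latitude: first.latitude, longitude: first.longitude };
}

function forecastUrl({ latitude, longitude }: Coordinates): string {
  return (
    "https://api.open-meteo.com/v1/forecast" +
    `?latitude=${latitude}&longitude=${longitude}&current=temperature_2m`
  );
}

// Tool handler body: failures become an MCP error result rather than an exception.
async function getWeather(city: string, fetchJson: FetchJson) {
  try {
    const coords = await geocode(city, fetchJson);
    const data = (await fetchJson(forecastUrl(coords))) as {
      current: { temperature_2m: number };
    };
    const text = `Weather in ${city}: ${data.current.temperature_2m}°C`;
    return { content: [{ type: "text" as const, text }] };
  } catch (error) {
    const message = error instanceof Error ? error.message : String(error);
    return {
      isError: true,
      content: [{ type: "text" as const, text: `Could not get weather: ${message}` }],
    };
  }
}

// In a real server, pass a thin wrapper around the global fetch.
const liveFetch: FetchJson = async (url) => {
  const res = await fetch(url);
  if (!res.ok) throw new Error(`HTTP ${res.status} from ${url}`);
  return res.json();
};
```

Inside the server.tool handler you would then return getWeather(city, liveFetch), which keeps the handler itself to a single line.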

Step 3: Add the HTTP transport

ChatGPT requires streamable HTTP transport. Add an Express server (or any HTTP framework):

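    import express from "express";

    const app = express();
    app.use(express.json());

    app.post("/mcp", async (req, res) => {
      // Stateless mode: a fresh transport per request, no session IDs.
      const transport = new StreamableHTTPServerTransport({
        sessionIdGenerator: undefined,
      });
      // Release the transport once the response has finished.
      res.on("close", () => transport.close());
      await server.connect(transport);
      // Pass along the body that express.json() already parsed.
      await transport.handleRequest(req, res, req.body);
    });

    app.listen(3000, () => {
      console.log("MCP server running on port 3000");
    });

Step 4: Deploy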

Deploy your server to any cloud provider — Vercel, Railway, Fly.io, AWS, or your own infrastructure. The only requirement is a publicly accessible HTTPS URL.

Once deployed, your endpoint URL (for example, https://your-server.com/mcp) is what you paste into ChatGPT's MCP settings, just like the drio URL in Path 1.

Step 5: Add to ChatGPT

Follow the same steps as Path 1, Step 3. The process for adding the URL to ChatGPT is identical regardless of how you built the server.

What ChatGPT supports

As of March 2026, here is what works with MCP in ChatGPT based on the official documentation:

- Tools — Full support for tool discovery, invocation, and response rendering

- Streamable HTTP transport — The only supported transport. stdio (local servers) is not supported in ChatGPT.

- Structured content — ChatGPT renders structured content including text, images, and interactive widgets

- Authentication — OAuth 2.0 and API key authentication for server endpoints

- Multiple tools per server — A single MCP server can expose many tools

What is not yet supported

- Resources — ChatGPT does not yet consume MCP resources for context

- Prompts — MCP prompt templates are not yet used by ChatGPT

- stdio transport — ChatGPT only supports remote servers, not local ones

- Notifications — Progress notifications and server-to-client updates have limited support

These limitations may change as OpenAI continues to expand their MCP support.

Testing before you connect

Before adding your server to ChatGPT, test it locally with the MCP Inspector. Inspector is a developer tool that lets you:

- Connect to any MCP server (local or remote)

- Browse available tools, resources, and prompts

- Invoke tools with custom parameters

- View the raw JSON-RPC messages

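This is much faster for debugging than testing directly in ChatGPT. If your tool works in Inspector, protocol-level problems are unlikely to be the cause of any remaining issues in ChatGPT.

    npx @modelcontextprotocol/inspector https://your-server.com/mcp

If the Inspector UI opens without a connection, select the Streamable HTTP transport and enter the server URL manually.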

Troubleshooting

Tool does not appear in ChatGPT

- Check the URL — Make sure it is HTTPS, no trailing whitespace, and points to the correct MCP endpoint path.

- Check server logs — ChatGPT sends an initialization request when you connect. If your server is not receiving it, the URL may be wrong or the server may be down.

- Wait a moment — ChatGPT may take a few seconds to discover tools after connection.

- Check your plan — MCP tools require ChatGPT Plus, Team, or Enterprise.

Tool is invoked but returns an error

- Timeout — ChatGPT has a timeout for tool responses. If your API calls are slow, consider adding caching or optimizing the query.

- Auth failure — If your server requires authentication, make sure you configured it in ChatGPT's MCP settings.

- Schema mismatch — The tool's input schema must be valid JSON Schema. ChatGPT validates inputs before sending them.
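For the timeout case, one possible approach is to put a deadline on upstream calls and cache recent results. The sketch below is a minimal, single-process version of that idea. The withTimeout, TtlCache, and cached names are invented for this example, and the right timeout and TTL values are assumptions you would need to tune, since ChatGPT's exact limit is not stated here.

```typescript
// Reject if the wrapped promise does not settle within `ms` milliseconds.
function withTimeout<T>(promise: Promise<T>, ms: number): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(() => reject(new Error(`Timed out after ${ms} ms`)), ms);
    promise.then(
      (value) => { clearTimeout(timer); resolve(value); },
      (error) => { clearTimeout(timer); reject(error); }
    );
  });
}

// Small in-memory cache with a time-to-live; suitable for a single process only.
class TtlCache<V> {
  private entries = new Map<string, { value: V; expiresAt: number }>();
  private ttlMs: number;
  private now: () => number;

  constructor(ttlMs: number, now: () => number = Date.now) {
    this.ttlMs = ttlMs;
    this.now = now; // injectable clock, which makes expiry easy to test
  }

  get(key: string): V | undefined {
    const entry = this.entries.get(key);
    if (!entry || entry.expiresAt <= this.now()) return undefined;
    return entry.value;
  }

  set(key: string, value: V): void {
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
  }
}

// Serve from cache when possible; otherwise call upstream with a deadline.
async function cached<V>(
  cache: TtlCache<V>,
  key: string,
  load: () => Promise<V>,
  timeoutMs: number
): Promise<V> {
  const hit = cache.get(key);
  if (hit !== undefined) return hit;
  const value = await withTimeout(load(), timeoutMs);
  cache.set(key, value);
  return value;
}
```

A weather handler could, for instance, call cached(cache, city, () => fetchForecast(city), 5000), where fetchForecast stands in for your own upstream call.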

Widgets do not render

- Check content type — Structured content must follow the MCP content format. Plain text responses render as text only.

- Check ChatGPT version — Make sure you are using the latest version of ChatGPT.

- Fallback behavior — If a widget type is not supported, ChatGPT falls back to rendering the raw text content.
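Because of that fallback behavior, it is usually worth returning a readable text block alongside any structured data. The sketch below shows one way to do that. The content array and the structuredContent field are defined in recent revisions of the MCP specification; the WeatherData shape and the toToolResult helper are assumptions for this example, and how a given client renders structuredContent is up to that client.

```typescript
// Hypothetical data shape for the weather example.
interface WeatherData { city: string; temperatureC: number }

// Build a tool result that carries machine-readable data plus a text fallback.
function toToolResult(data: WeatherData) {
  return {
    // Always include human-readable text so any client has something to show.
    content: [
      {
        type: "text" as const,
        text: `Weather in ${data.city}: ${data.temperatureC}°C`,
      },
    ],
    // Structured payload for clients that can render richer output.
    structuredContent: { city: data.city, temperatureC: data.temperatureC },
  };
}

console.log(JSON.stringify(toToolResult({ city: "Berlin", temperatureC: 12.5 })));
```

If the widget fails to render, the user still sees the text line instead of an empty response.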

Server works locally but not from ChatGPT

- HTTPS required — ChatGPT only connects to HTTPS URLs. Self-signed certificates will not work.

- Firewall/network — Make sure your server is publicly accessible. Try accessing the URL from a different network.

- CORS — While MCP uses server-to-server communication, some deployments may need CORS headers for the initial connection.
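Several of these checks can be automated before you paste the URL anywhere. The following is a small preflight sketch; checkMcpUrl is a made-up helper, and its private-network detection is a simple heuristic based on common hostnames and address prefixes, not a complete reachability test.

```typescript
// Return a list of problems that would stop a remote client from reaching the URL.
function checkMcpUrl(raw: string): string[] {
  const problems: string[] = [];
  if (raw !== raw.trim()) problems.push("URL has leading or trailing whitespace");

  let url: URL;
  try {
    url = new URL(raw.trim());
  } catch {
    return [...problems, "URL is not parseable"];
  }

  if (url.protocol !== "https:") problems.push("URL must use https");

  // Heuristic only: catches the most common local and private hosts.
  const host = url.hostname;
  const isLocal =
    host === "localhost" ||
    host.startsWith("127.") ||
    host.startsWith("10.") ||
    host.startsWith("192.168.") ||
    host.endsWith(".local");
  if (isLocal) problems.push("host is only reachable on your own machine or network");

  return problems;
}

console.log(checkMcpUrl("http://localhost:3000/mcp"));
```

An empty array means none of these basic issues were found; it does not prove the server is up, so checking the server logs is still the next step.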

FAQ

What does setting up an MCP server for ChatGPT involve?

It is the process of connecting ChatGPT to a remote MCP server over streamable HTTP, letting ChatGPT discover the available tools, and then using those tools inside conversations.

Can ChatGPT connect to a local MCP server?

Not directly. ChatGPT supports remote MCP servers over HTTPS, not local stdio servers.

Advanced patterns

Tool chaining

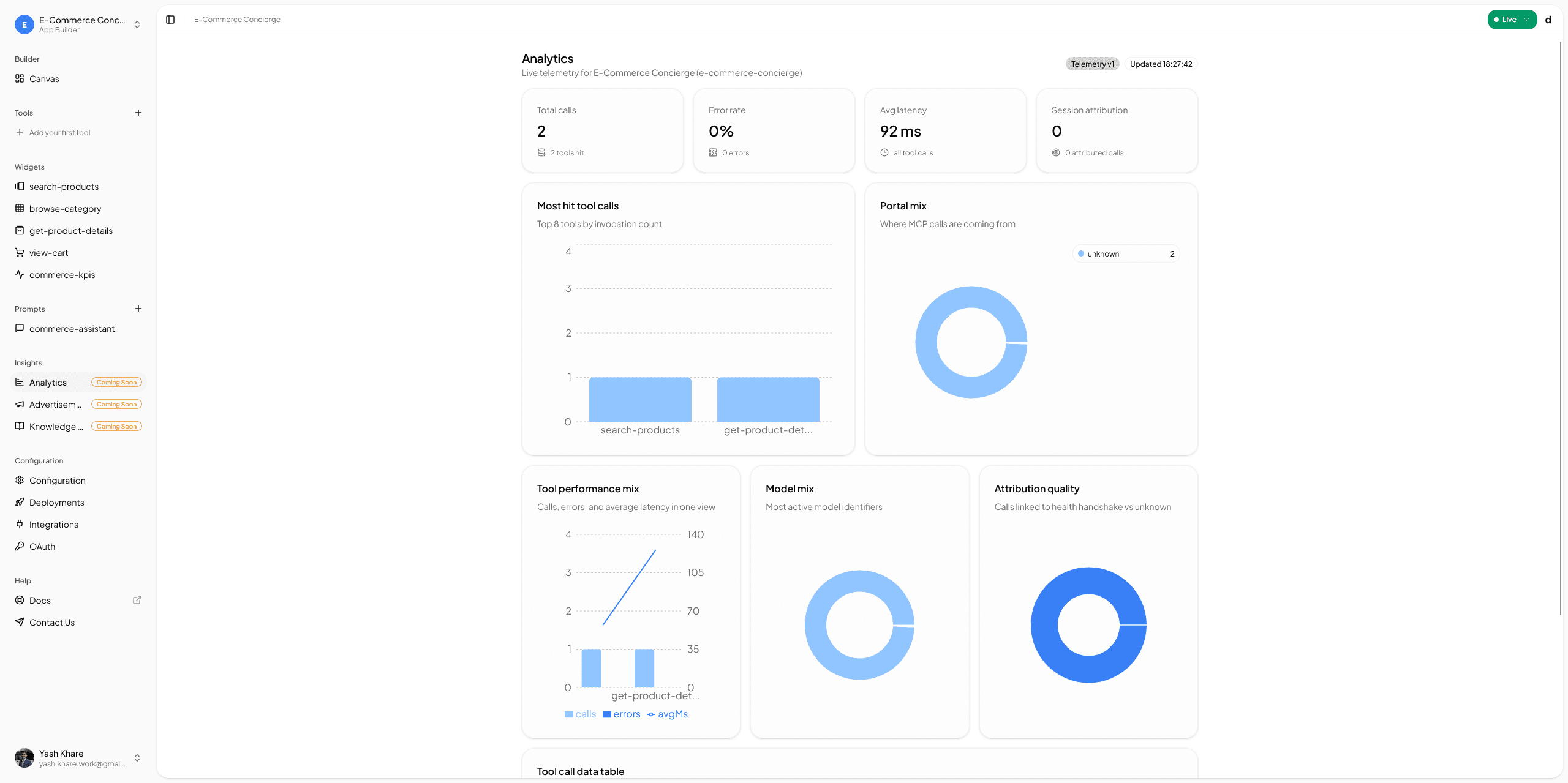

Build multiple tools that work together. A search_products tool returns a list, and a get_product_details tool returns a detail view. When the user clicks a product card, the action sends the product ID back to the AI, which automatically invokes the detail tool. This creates a multi-step conversational workflow.

Widget actions

Widgets can include action buttons that send structured messages back to the AI. A product card with "Add to Cart" sends { action: "add_to_cart", product_id: "123" } — and the AI routes that to your add_to_cart tool. This is how you build interactive experiences.
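To illustrate the mapping from an action message to a tool, here is a simplified sketch. In practice the model decides which tool to call; this code only shows the idea of dispatching by action name. The message shape is taken from the example above, while the tools registry, the handler signatures, and routeAction are hypothetical.

```typescript
// Message shape from the example above; real widget payloads may differ.
interface WidgetAction {
  action: string;
  [key: string]: unknown;
}

type ToolHandler = (args: Record<string, unknown>) => string;

// Hypothetical handlers keyed by tool name.
const tools: Record<string, ToolHandler> = {
  add_to_cart: (args) => `Added product ${String(args.product_id)} to cart`,
  get_product_details: (args) => `Details for product ${String(args.product_id)}`,
};

// Route an action to the tool of the same name, passing the other fields as arguments.
function routeAction(message: WidgetAction): string {
  const { action, ...args } = message;
  const handler = tools[action];
  if (!handler) return `No tool registered for action "${action}"`;
  return handler(args);
}

console.log(routeAction({ action: "add_to_cart", product_id: "123" }));
// Added product 123 to cart
```

Keeping action names identical to tool names makes the intended routing obvious, both to the model and to anyone reading your server code.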

Multi-tool apps

A single MCP server can expose many tools. A CRM app might have search_contacts, get_deal_pipeline, create_task, and log_activity — all sharing the same API connection and branding. This creates a richer experience than a single tool.

For more on designing interactive MCP tools with widgets, see Building MCP Tools with Rich UIs. For inspiration on what to build, check out Best MCP Tools for ChatGPT.

What to do next

You have a working MCP tool in ChatGPT. Here is where to go from here:

- Build something useful — The weather example is a starting point. Connect your real APIs — your CRM, your database, your product catalog.

- Add more tools — A single MCP server can expose multiple tools. Start building a suite.

- Try other clients — Your MCP server works with any MCP client. Try it in MCP Setup for Claude Desktop or MCP Setup for Cursor too.

- Read the docs — Check the quickstart guide for a deeper dive into drio's capabilities.

The best ChatGPT tools are not generic utilities — they are specific, useful tools that solve real problems for your users. Build something only you can build.