What Are Claude Plugins? How Claude Connects to External Tools

Claude doesn't have plugins the way ChatGPT does. Instead, it uses MCP — the open protocol Anthropic created. Here's how Claude's tool ecosystem actually works.

If you searched for "Claude plugins," here is the short answer: Claude does not have plugins. Not in the way ChatGPT had them, anyway. There is no plugin store, no plugin manifest format, no plugin review process. Instead, Claude connects to external tools through MCP — the Model Context Protocol — which Anthropic created and released as an open standard in November 2024.

This is not just a naming difference. It is a fundamentally different architecture. ChatGPT went through a plugin beta that never really worked, then Custom GPTs, then finally adopted MCP as its app standard. Anthropic skipped the proprietary steps entirely and went straight to the open protocol. The result is that Claude's tool ecosystem is more flexible and more interoperable, but also less centralized and less curated than what ChatGPT offers.

Let me walk through how it actually works.

Why Claude never had plugins

To understand Claude's approach, it helps to look at what happened with ChatGPT plugins and why Anthropic chose a different path.

OpenAI announced a ChatGPT plugin beta in March 2023 with a proprietary format — developers built API wrappers, registered them with OpenAI, and users were supposed to install them from a directory. It never really worked. Access was extremely limited, the tools were unreliable, and almost nobody used them. By April 2024, OpenAI quietly shut the beta down.

Anthropic was watching this play out. When it came time to build tool connectivity for Claude, they made a different bet. Instead of creating a proprietary plugin system that locked developers into the Claude ecosystem, they designed an open protocol that any AI client could adopt. That protocol became MCP.

The logic was practical. If you are not the market leader in user base (ChatGPT had a massive head start), you benefit more from open standards than walled gardens. An open protocol attracts developers because their work is portable. And if the protocol becomes the standard, the platform that created it has a structural advantage in understanding and implementing it.

That is exactly what happened. MCP launched in November 2024, and within months it was adopted by OpenAI, Google, Microsoft, JetBrains, Cursor, and dozens of others. For the full story on the protocol, see What Is MCP?

Claude's tool stack

Claude has several layers of tool connectivity. They are complementary, not competing.

```mermaid
graph TB
    L4["Claude Code Skills and Plugins<br/>Markdown instructions + MCP servers for the CLI"]
    L3["Integrations (Claude.ai)<br/>75+ remote MCP servers: GitHub, Slack, Drive, etc."]
    L2["MCP (Model Context Protocol)<br/>Open standard, works across all AI clients"]
    L1["Tool Use API<br/>Proprietary: Claude API function calling"]
    L4 --> L3 --> L2 --> L1
    style L4 fill:#faf5ff,stroke:#7c3aed,stroke-width:2px,color:#2e1065
    style L3 fill:#f0fdf4,stroke:#16a34a,stroke-width:2px,color:#052e16
    style L2 fill:#e0e7ff,stroke:#4f46e5,stroke-width:2px,color:#1e1b4b
    style L1 fill:#fff7ed,stroke:#ea580c,stroke-width:2px,color:#431407
```

Tool Use API

This is Claude's built-in, proprietary mechanism for calling functions. If you are building an application on top of Claude via the Anthropic API, you define tools in your API request and Claude can invoke them during a conversation.

Tool Use is part of the Anthropic API and is specific to Claude. It is not MCP — it is a platform feature for developers building on the Claude API directly. Think of it as Claude's equivalent of OpenAI's function calling.
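One detail is easy to miss: Claude never runs your function itself. It replies with a tool_use block, your code executes the function, and you send the output back as a tool_result. The sketch below shows that round trip with plain dictionaries instead of the official SDK and sends nothing over the network. The get_weather tool, its stub implementation, the canned response, and the model placeholder are all hypothetical.

```python
import json

# Tool definition in the Messages API shape: name, description, JSON Schema in "input_schema".
WEATHER_TOOL = {
    "name": "get_weather",  # hypothetical tool
    "description": "Get the current temperature for a city.",
    "input_schema": {
        "type": "object",
        "properties": {"city": {"type": "string"}},
        "required": ["city"],
    },
}


def build_request(user_text: str) -> dict:
    """Build the JSON body for a Messages API call (not sent in this sketch)."""
    return {
        "model": "MODEL_ID_PLACEHOLDER",  # substitute a real model ID
        "max_tokens": 1024,
        "tools": [WEATHER_TOOL],
        "messages": [{"role": "user", "content": user_text}],
    }


def get_weather(city: str) -> str:
    # Stub; a real app would call a weather service here.
    return f"{city}: 18 degrees C"


HANDLERS = {"get_weather": get_weather}


def tool_results_for(response: dict) -> list:
    """Execute each tool_use block in Claude's reply and wrap the output as tool_result."""
    results = []
    for block in response["content"]:
        if block["type"] == "tool_use":
            output = HANDLERS[block["name"]](**block["input"])
            results.append({"type": "tool_result", "tool_use_id": block["id"], "content": output})
    return results


# A canned reply shaped like the API's response when Claude decides to use a tool.
fake_response = {
    "stop_reason": "tool_use",
    "content": [
        {"type": "text", "text": "Let me check."},
        {"type": "tool_use", "id": "toolu_01", "name": "get_weather", "input": {"city": "Paris"}},
    ],
}

follow_up = {"role": "user", "content": tool_results_for(fake_response)}
print(json.dumps(follow_up))
```

In a real exchange you would append Claude's assistant message and then this user message to the conversation history before calling the API again, so Claude can use the result in its answer.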

MCP (Model Context Protocol)

MCP is the open standard layer. While Tool Use is proprietary to Claude's API, MCP is a universal protocol that works across AI clients. Anthropic designed it, open-sourced it, and actively maintains the specification and SDKs.

The key difference: a tool built for the Tool Use API only works with Claude's API. A tool built as an MCP server works with Claude, ChatGPT, Cursor, VS Code Copilot, and 70+ other clients.
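To make the difference concrete: an MCP server advertises its tools in a tools/list result, where each tool has a name, a description, and a JSON Schema under inputSchema. The Tool Use API expects the same information with the schema under input_schema. The helper below is hypothetical (it belongs to neither SDK) and maps one shape onto the other, which is roughly the translation a client performs when it exposes MCP tools to a model's native function calling. The search_docs tool is also hypothetical.

```python
# A tools/list result as an MCP server might return it.
mcp_tools_list_result = {
    "tools": [
        {
            "name": "search_docs",
            "description": "Search product documentation.",
            "inputSchema": {
                "type": "object",
                "properties": {"query": {"type": "string"}},
                "required": ["query"],
            },
        }
    ]
}


def mcp_tool_to_claude_tool(tool: dict) -> dict:
    """Map an MCP tool definition onto the Messages API tool shape.

    MCP spells the schema key "inputSchema"; the Tool Use API spells it
    "input_schema". Name, description, and the schema itself carry over as-is.
    """
    return {
        "name": tool["name"],
        "description": tool.get("description", ""),
        "input_schema": tool["inputSchema"],
    }


claude_tools = [mcp_tool_to_claude_tool(t) for t in mcp_tools_list_result["tools"]]
print(claude_tools[0]["input_schema"]["required"])  # ['query']
```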

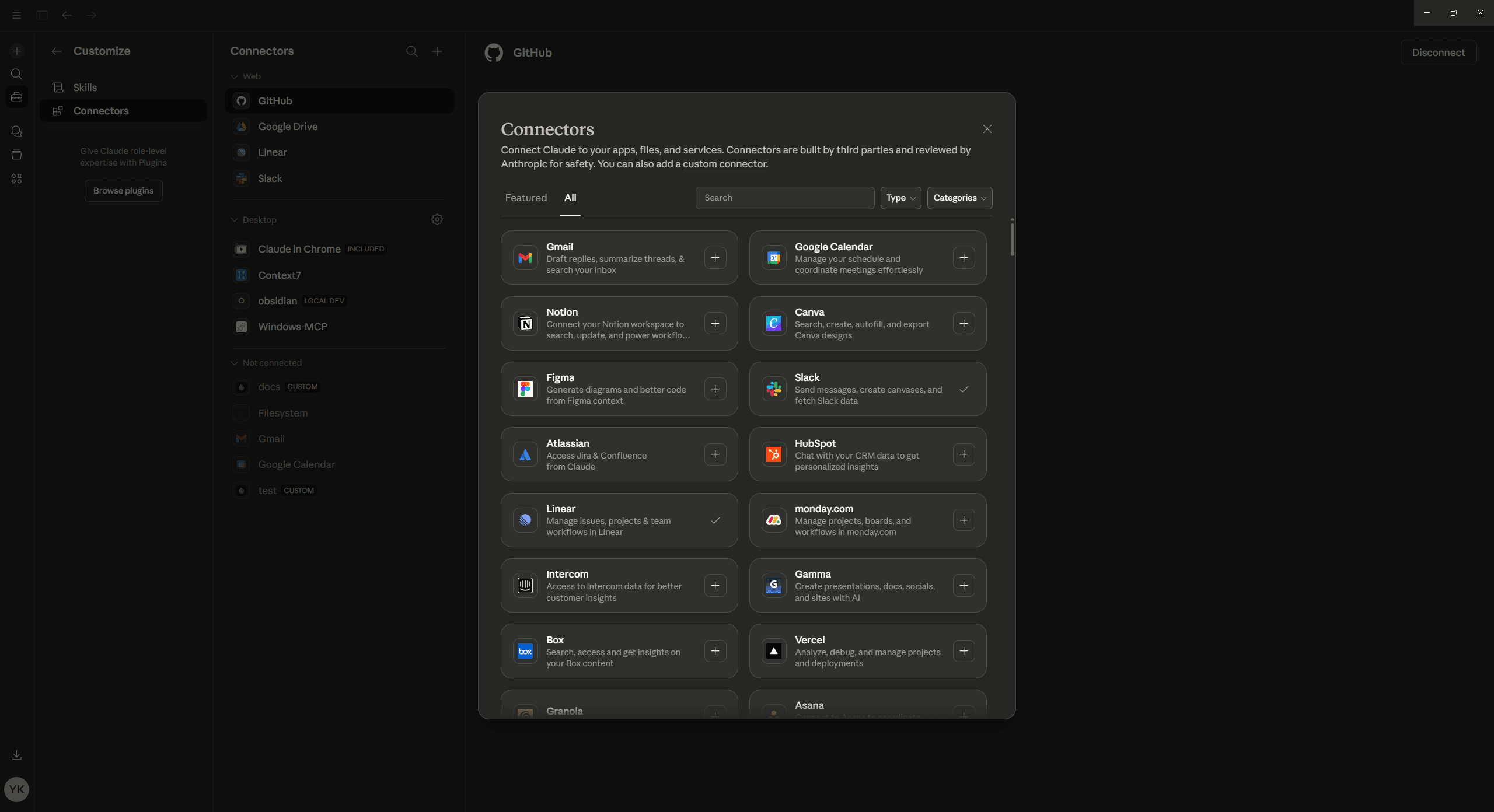

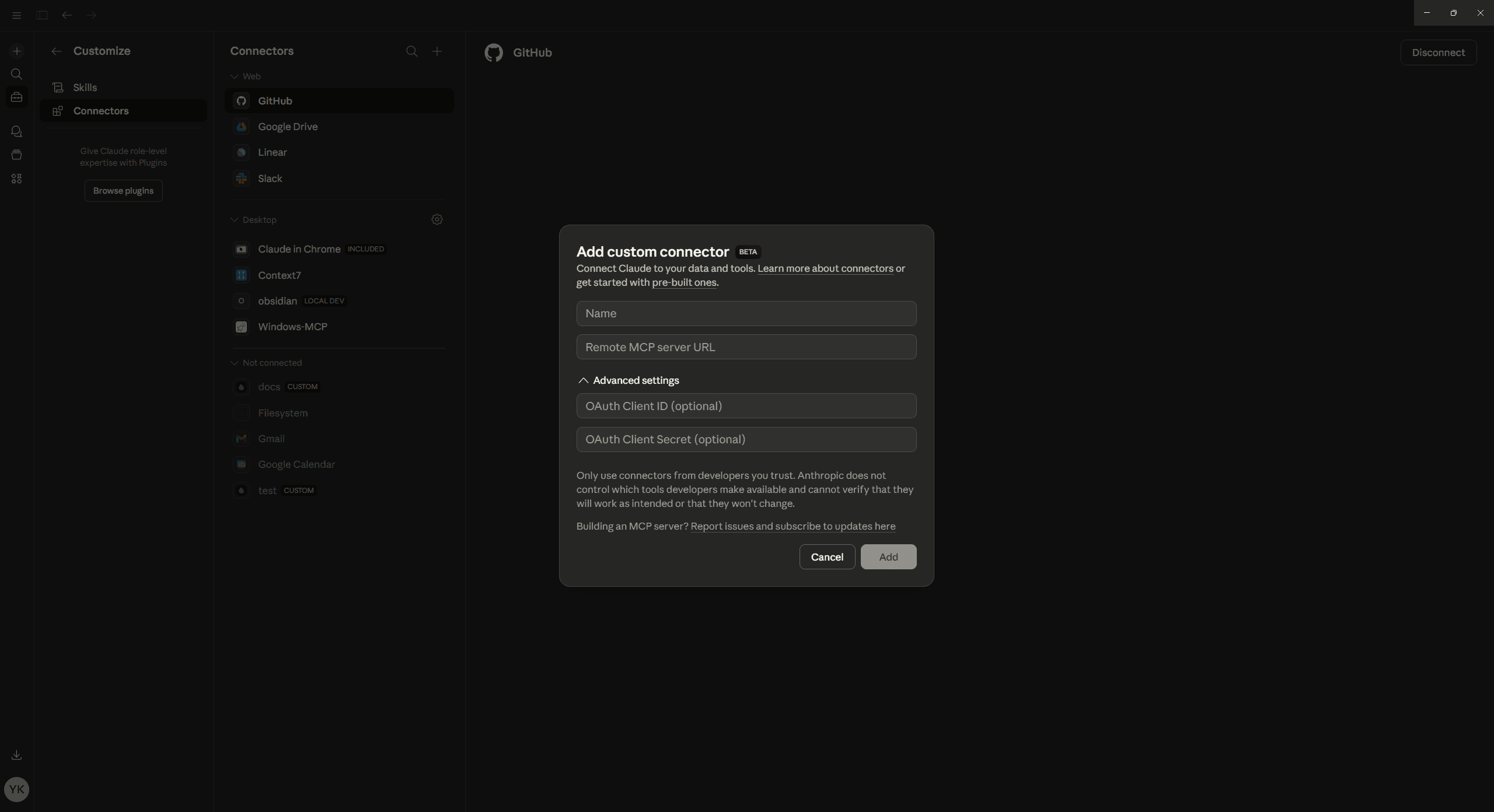

Integrations (Claude.ai)

Claude.ai — the web and mobile interface — has its own integrations directory. As of early 2026, there are over 75 integrations available, connecting Claude to services like Google Drive, GitHub, Slack, Notion, Jira, Linear, Salesforce, and more.

These integrations use remote MCP servers over Streamable HTTP. From a protocol standpoint, they are MCP connections. From a user standpoint, they look like built-in features — you enable them in settings and they just work.
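Under the hood, each of those connections is JSON-RPC 2.0 carried in HTTP POST requests. The sketch below builds, but does not send, the first request a client makes to a remote MCP server: the initialize handshake. The endpoint URL and client name are placeholders, and the protocol version string is one published revision of the MCP specification; check the spec for the value your client and server support.

```python
import json
import urllib.request

MCP_URL = "https://example.com/mcp"  # placeholder endpoint
PROTOCOL_VERSION = "2025-06-18"  # one published MCP spec revision


def build_initialize_request(url: str) -> urllib.request.Request:
    """Build the HTTP request that opens an MCP session (not sent here)."""
    body = {
        "jsonrpc": "2.0",
        "id": 1,
        "method": "initialize",
        "params": {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {},
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        },
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={
            "Content-Type": "application/json",
            # Streamable HTTP clients accept either a plain JSON reply or an SSE stream.
            "Accept": "application/json, text/event-stream",
        },
    )


req = build_initialize_request(MCP_URL)
print(req.get_method(), req.full_url)  # POST https://example.com/mcp
```

Passing req to urllib.request.urlopen against a real server would return either a JSON body or a text/event-stream response, so a client has to be ready to parse both.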

Skills and Plugins (Claude Code)

Claude Code, Anthropic's CLI tool for coding, added its own plugin system in October 2025 as a public beta. These are not the same as ChatGPT-style plugins. A Claude Code plugin is an installable bundle that can package slash commands, subagents, hooks, and MCP servers. You install one with a command, and it adds capabilities that Claude Code can use during coding sessions.

Claude Code also has "Skills": folders built around a SKILL.md markdown file of instructions (optionally with supporting files such as scripts) that Claude loads into context when a task calls for them. Skills are simpler than plugins; a skill is mostly plain-text guidance rather than an executable tool.
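A skill's markdown file opens with a short YAML frontmatter block. Anthropic's format puts a name and a description there, and the description is what helps Claude decide when the skill is relevant. The parser below is a simplified sketch: it handles only flat key: value lines between two --- fences (a real tool would use a YAML library), and the changelog-writer skill is hypothetical.

```python
def parse_skill_frontmatter(text: str) -> tuple:
    """Split a SKILL.md-style file into (frontmatter fields, markdown body)."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text  # no frontmatter at all
    fields = {}
    for i, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":  # closing fence: everything after is the body
            body = "\n".join(lines[i + 1:]).lstrip("\n")
            return fields, body
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return {}, text  # opening fence never closed: treat the whole file as body


example = """---
name: changelog-writer
description: Drafts changelog entries from merged pull requests.
---
# Changelog writer

Group changes under Added, Changed, and Fixed.
"""

meta, body = parse_skill_frontmatter(example)
print(meta["name"])  # changelog-writer
```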

How MCP works in Claude Desktop

Claude Desktop was the first AI client to support MCP, and it remains one of the most complete implementations. It supports all three server primitives (tools, resources, and prompts) and both transports: stdio for local servers defined in its config file, and Streamable HTTP for remote servers added as connectors in Claude's settings.

The config file

MCP servers in Claude Desktop are configured via a JSON file. The location depends on your OS:

- macOS: ~/Library/Application Support/Claude/claude_desktop_config.json
- Windows: %APPDATA%\Claude\claude_desktop_config.json
- Linux (unofficial builds only): ~/.config/Claude/claude_desktop_config.json
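If you script your setup, the lookup above fits in a small helper. This is a sketch that simply encodes the listed paths; the function name is hypothetical, and the Windows branch falls back to the usual Roaming folder when APPDATA is unset.

```python
import os
import platform
from pathlib import Path
from typing import Optional


def claude_desktop_config_path(system: Optional[str] = None) -> Path:
    """Return the claude_desktop_config.json location for an OS name.

    `system` uses platform.system() values: "Darwin", "Windows", or "Linux".
    """
    system = system or platform.system()
    if system == "Darwin":
        return Path.home() / "Library" / "Application Support" / "Claude" / "claude_desktop_config.json"
    if system == "Windows":
        # %APPDATA% normally points at the user's AppData\Roaming folder.
        appdata = os.environ.get("APPDATA") or str(Path.home() / "AppData" / "Roaming")
        return Path(appdata) / "Claude" / "claude_desktop_config.json"
    if system == "Linux":
        return Path.home() / ".config" / "Claude" / "claude_desktop_config.json"
    raise ValueError(f"unsupported platform: {system}")


if __name__ == "__main__":
    print(claude_desktop_config_path())
```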

Here is what a typical configuration looks like:

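```json
{
  "mcpServers": {
    "filesystem": {
      "command": "npx",
      "args": [
        "-y",
        "@modelcontextprotocol/server-filesystem",
        "/Users/you/projects"
      ]
    },
    "github": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-github"],
      "env": {
        "GITHUB_PERSONAL_ACCESS_TOKEN": "ghp_your_token_here"
      }
    },
    "my-remote-tool": {
      "command": "npx",
      "args": ["-y", "mcp-remote", "https://app.getdrio.com/my-app/mcp"]
    }
  }
}
```

Each key under mcpServers is a server name. Local servers use command and args: Claude Desktop launches them as subprocesses and talks to them over stdio, passing any env values as environment variables. The config file does not accept a bare url entry for remote servers; those are added through the Connectors section of Claude's settings instead. The my-remote-tool entry shows a common workaround, the community mcp-remote package, which runs locally over stdio and forwards requests to the remote URL.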

You can mix local and remote servers freely. After editing the config, restart Claude Desktop and the servers will be available in your conversations. For the complete setup walkthrough, see How to Set Up MCP Servers in Claude Desktop.
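To see how the file maps onto process launches, here is a sketch that reads an mcpServers block and turns each stdio entry into the command line and environment it describes. It illustrates the config semantics and is not Claude Desktop's actual loader. Entries without a command are skipped, and the token variable name follows the reference GitHub server (GITHUB_PERSONAL_ACCESS_TOKEN).

```python
import json
import os

CONFIG_TEXT = """
{
  "mcpServers": {
    "filesystem": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-filesystem", "/Users/you/projects"]
    },
    "github": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-github"],
      "env": {"GITHUB_PERSONAL_ACCESS_TOKEN": "ghp_your_token_here"}
    }
  }
}
"""


def stdio_launch_specs(config: dict) -> dict:
    """Map each server name to (argv, env) for servers started as subprocesses."""
    specs = {}
    for name, entry in config.get("mcpServers", {}).items():
        if "command" not in entry:
            print(f"skipping {name}: no 'command', so it is not a stdio server")
            continue
        argv = [entry["command"], *entry.get("args", [])]
        # Here the child inherits this process's environment plus the configured
        # variables; a real client may pass a more restricted environment.
        env = {**os.environ, **entry.get("env", {})}
        specs[name] = (argv, env)
    return specs


specs = stdio_launch_specs(json.loads(CONFIG_TEXT))
for name, (argv, _env) in specs.items():
    print(name, " ".join(argv))
```

Handing one of these pairs to subprocess.Popen(argv, env=env, stdin=subprocess.PIPE, stdout=subprocess.PIPE) is, in spirit, what starting a local server amounts to.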

What happens at runtime

When Claude Desktop starts (or restarts), it reads the config and connects to each configured server, along with any remote connectors you have enabled. For local servers, it spawns the process and exchanges messages over stdin and stdout. For remote servers, it sends an HTTP initialization request.

Each server answers the initialization request by declaring which capabilities it offers (tools, resources, prompts). Claude Desktop then requests the actual lists, registers what it receives, and makes it available in conversations. When a user asks something that matches a tool's purpose, Claude calls the tool, receives the response, and renders the result.
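Over stdio, that exchange is a sequence of JSON-RPC 2.0 messages, one JSON object per line. The sketch below builds the client side of a typical session in order: initialize, the initialized notification, tools/list, and a tools/call. The client name, the read_file tool, and its arguments are hypothetical, and the protocol version is one published spec revision.

```python
import json

PROTOCOL_VERSION = "2025-06-18"  # one published MCP spec revision


def frame(message: dict) -> str:
    """Serialize one JSON-RPC message as a single line for the stdio transport.

    json.dumps without indent never emits a raw newline, so each message
    stays on exactly one line.
    """
    return json.dumps(message, separators=(",", ":")) + "\n"


def client_session() -> list:
    return [
        # 1. Negotiate protocol version and capabilities.
        {"jsonrpc": "2.0", "id": 1, "method": "initialize",
         "params": {"protocolVersion": PROTOCOL_VERSION, "capabilities": {},
                    "clientInfo": {"name": "example-client", "version": "0.1.0"}}},
        # 2. A notification (no id, so no reply): the handshake is complete.
        {"jsonrpc": "2.0", "method": "notifications/initialized"},
        # 3. Ask the server which tools it exposes.
        {"jsonrpc": "2.0", "id": 2, "method": "tools/list"},
        # 4. Call one tool with arguments matching its inputSchema (hypothetical tool).
        {"jsonrpc": "2.0", "id": 3, "method": "tools/call",
         "params": {"name": "read_file", "arguments": {"path": "README.md"}}},
    ]


wire = "".join(frame(m) for m in client_session())
print(wire, end="")
```

A real client waits for the reply to each request (matched by id) before moving on; the list only shows the order of the conversation.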

The rendering supports structured content. Cards, tables, interactive elements — Claude Desktop handles MCP widget primitives. This is a significant upgrade from what text-only systems offered.

How MCP works in Claude Code

Claude Code — the CLI for coding with Claude — also supports MCP, with a different configuration model.

Adding servers

You add MCP servers to Claude Code with the claude mcp add command:

```bash
# Add a stdio server (everything after -- is the command Claude Code runs)
claude mcp add my-server -- npx -y @modelcontextprotocol/server-filesystem /path/to/dir

# Add a remote server over Streamable HTTP
claude mcp add --transport http my-remote-tool https://app.getdrio.com/my-app/mcp
```

Three scopes

Claude Code supports three configuration scopes:

- Local (the default): private to you and available only in the current project.
- Project: stored in .mcp.json at the project root and shared with the team via version control.
- User: stored in your global user config and available in all projects.

This scoping system is useful for teams. You can have project-specific servers (a database tool for that project's database) alongside global servers (a general-purpose GitHub tool).
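When the same server name appears in more than one scope, Claude Code's documentation describes local entries as winning over project entries, which in turn win over user entries. A sketch of that resolution rule follows; the function name and the example-postgres-mcp package are hypothetical.

```python
def resolve_servers(user: dict, project: dict, local: dict) -> dict:
    """Merge per-scope server maps; on name clashes, local > project > user."""
    merged = {}
    # Apply the lowest-precedence scope first so higher scopes overwrite it.
    for scope in (user, project, local):
        merged.update(scope)
    return merged


user_scope = {"github": {"command": "npx", "args": ["-y", "@modelcontextprotocol/server-github"]}}
project_scope = {  # e.g. the "mcpServers" object from a shared .mcp.json
    "app-db": {"command": "npx", "args": ["-y", "example-postgres-mcp"]},  # hypothetical package
}
local_scope = {  # a personal override of the team's database server
    "app-db": {"command": "npx", "args": ["-y", "example-postgres-mcp", "--read-only"]},
}

servers = resolve_servers(user_scope, project_scope, local_scope)
print(sorted(servers))  # ['app-db', 'github']
print(servers["app-db"]["args"][-1])  # --read-only
```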

The decentralized model: no app store

Here is the biggest difference between Claude and ChatGPT when it comes to tools. ChatGPT has a centralized app directory at chatgpt.com/apps. OpenAI curates submissions, features partners, and controls the distribution pipeline. It is an app store model.

Claude does not have that. There is no "Claude App Store." MCP servers are decentralized. Anyone can build an MCP server, deploy it anywhere, and share the URL. Users configure their own connections — either through Claude Desktop's JSON config, Claude Code's CLI commands, or Claude.ai's integrations page.

The trade-offs

Centralized (ChatGPT's model):

- Better discoverability for users

- Quality control through curation

- Larger distribution reach (900M+ weekly active users)

- Single point of approval/rejection

Decentralized (Claude's model):

- No gatekeeping — anyone can deploy a server

- No review delays

- Full control over distribution

- More developer freedom, less discovery

```mermaid
graph TB
    subgraph centralized["ChatGPT: Centralized"]
        direction TB
        Dev1["Developer"] --> Review["OpenAI Review"] --> Store["App Directory"] --> User1["900M+ Users"]
    end
    subgraph decentralized["Claude: Decentralized"]
        direction TB
        Dev2["Developer"] --> Deploy["Deploy anywhere"] --> URL["Share URL"]
        URL --> Desktop["Claude Desktop"]
        URL --> Code["Claude Code"]
        URL --> Web["Claude.ai"]
    end
    style centralized fill:#eff6ff,stroke:#2563eb
    style decentralized fill:#faf5ff,stroke:#7c3aed
```

Neither model is strictly better. ChatGPT's approach is better for reaching consumers who do not know what tools exist. Claude's approach is better for developers and technical users who know what they want and just need to connect it.

The 75+ integrations on Claude.ai are a partial bridge — they give a curated, directory-like experience for common services while keeping the protocol open for custom servers.

User base and positioning

The numbers paint a clear picture of how the two platforms differ.

ChatGPT has over 900 million weekly active users. It is the mainstream AI assistant — used by everyone from students to enterprise teams to casual users.

Claude's user base is smaller: roughly 19 million monthly active users as of late 2025. But Claude over-indexes on developers and technical users. In coding-specific workflows, Claude's market share is disproportionately high. Cursor, one of the most popular AI code editors, offers Claude models, and they are widely used there.

This positioning difference affects tool strategy. If you are building a tool for a broad consumer audience, ChatGPT's app directory gives you more reach. If you are building developer tools, infrastructure integrations, or technical workflows, Claude's ecosystem might be more relevant.

The good news is that MCP makes this a non-decision. Build one MCP server and it works on both platforms. You do not have to choose. For a detailed comparison of how different AI clients implement MCP, see MCP Client Comparison.

Building tools for Claude

Whether you want to reach Claude Desktop users, Claude.ai users, or Claude Code users, you are building the same thing: an MCP server.

Code-first

Use the TypeScript SDK or Python SDK to build a server from scratch. Define your tools with descriptions and input schemas, implement the handlers, set up either stdio or Streamable HTTP transport, and deploy.
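To show what the SDKs take care of, here is a dependency-free sketch of a stdio server that answers initialize, tools/list, and tools/call for one hypothetical add tool. It skips most of the spec (version negotiation, cancellation, logging, and more), so treat it as a protocol illustration and use an official SDK for real servers. The --stdio flag is an arbitrary choice that keeps imports and tests from blocking on stdin.

```python
import json
import sys

PROTOCOL_VERSION = "2025-06-18"  # one published spec revision; real servers negotiate

TOOLS = [{
    "name": "add",  # hypothetical tool
    "description": "Add two numbers.",
    "inputSchema": {
        "type": "object",
        "properties": {"a": {"type": "number"}, "b": {"type": "number"}},
        "required": ["a", "b"],
    },
}]


def error(msg_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": msg_id, "error": {"code": code, "message": message}}


def handle(message: dict):
    """Return a JSON-RPC response for a request, or None for a notification."""
    if "id" not in message:
        return None  # e.g. notifications/initialized: no reply allowed
    msg_id, method = message["id"], message.get("method")
    params = message.get("params", {})
    if method == "initialize":
        result = {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {"tools": {}},
            "serverInfo": {"name": "sketch-server", "version": "0.1.0"},
        }
    elif method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call":
        if params.get("name") != "add":
            return error(msg_id, -32602, f"Unknown tool: {params.get('name')}")
        args = params.get("arguments", {})
        try:
            total = args["a"] + args["b"]
        except (KeyError, TypeError):
            return error(msg_id, -32602, "add needs numeric 'a' and 'b'")
        result = {"content": [{"type": "text", "text": str(total)}], "isError": False}
    else:
        return error(msg_id, -32601, f"Method not found: {method}")
    return {"jsonrpc": "2.0", "id": msg_id, "result": result}


def main() -> None:
    # stdio transport: one message per line in, one reply per line out.
    # Anything else on stdout would corrupt the stream, so log to stderr.
    for line in sys.stdin:
        if line.strip():
            response = handle(json.loads(line))
            if response is not None:
                sys.stdout.write(json.dumps(response) + "\n")
                sys.stdout.flush()


if __name__ == "__main__" and "--stdio" in sys.argv[1:]:
    main()
```

A Claude Desktop entry with "command": "python3" and "args": ["/path/to/server.py", "--stdio"] (a hypothetical path) would launch it as a local server.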

For local development, stdio is convenient — Claude Desktop launches the server as a subprocess and communication happens over stdin/stdout. For production deployment, Streamable HTTP makes the server accessible from Claude.ai and other remote clients.
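For the remote case, the same JSON-RPC handling sits behind an HTTP endpoint. The sketch below uses only Python's standard library: it accepts POSTed JSON-RPC messages at a single /mcp path (a path chosen for this sketch), replies with a plain JSON body, and returns 202 Accepted for notifications. It implements only initialize and ping, and it leaves out sessions, SSE streaming, authentication, and Origin checks, all of which a production Streamable HTTP server needs.

```python
import json
import sys
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

PROTOCOL_VERSION = "2025-06-18"  # one published MCP spec revision


def handle(message: dict):
    """Tiny JSON-RPC dispatcher (initialize and ping only); None for notifications."""
    if "id" not in message:
        return None
    if message.get("method") == "initialize":
        result = {"protocolVersion": PROTOCOL_VERSION, "capabilities": {},
                  "serverInfo": {"name": "http-sketch", "version": "0.1.0"}}
    elif message.get("method") == "ping":
        result = {}
    else:
        return {"jsonrpc": "2.0", "id": message["id"],
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": message["id"], "result": result}


class MCPEndpoint(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/mcp":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        try:
            message = json.loads(self.rfile.read(length))
        except ValueError:  # covers bad JSON and bad UTF-8
            self.send_error(400, "Body must be JSON")
            return
        if not isinstance(message, dict):
            self.send_error(400, "Expected a single JSON-RPC message")
            return
        response = handle(message)
        if response is None:
            # Notifications are acknowledged with 202 and no body.
            self.send_response(202)
            self.send_header("Content-Length", "0")
            self.end_headers()
            return
        body = json.dumps(response).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):  # silence per-request logging
        pass


def make_server(port: int = 8000) -> ThreadingHTTPServer:
    # Bound to localhost; exposing it publicly would need auth and Origin checks.
    return ThreadingHTTPServer(("127.0.0.1", port), MCPEndpoint)


if __name__ == "__main__" and "--serve" in sys.argv[1:]:
    make_server().serve_forever()
```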

Visual builder

drio lets you build MCP servers visually — define tools, connect APIs, design widget responses, and deploy to a production URL. The deployed server works with Claude Desktop, Claude.ai, Claude Code, ChatGPT, and every other MCP client. For a walkthrough of the visual approach, see Build AI Apps Without Code.

Connecting to ChatGPT too

Since MCP apps work across clients, the same server you connect to Claude also works with ChatGPT. Deploy once, then add the URL as a custom connector in Claude and in ChatGPT's app settings. Same tool, same code, two platforms. For the ChatGPT-specific setup, see How to Add MCP Tools to ChatGPT.

The bigger picture

Anthropic's decision to create MCP instead of a proprietary plugin system turned out to be the defining move of the AI tool ecosystem. By going open first, they set the standard that everyone else adopted — including their biggest competitor.

The practical implication for builders is simple. Do not think about "Claude plugins" or "ChatGPT apps" as separate categories. Think about MCP servers. Build one, deploy it, and connect it to every AI client your users care about. The protocol handles the interoperability. Your job is to build something useful.

Takeaways

- Claude does not have plugins in the ChatGPT sense. It uses MCP — the open protocol Anthropic created.

- Claude's tool stack has four layers: Tool Use API (proprietary), MCP (open standard), Integrations (Claude.ai), and Plugins/Skills (Claude Code).

- Claude Desktop supports tools, resources, and prompts over both stdio and HTTP: local servers are configured in a JSON file, remote servers are added as connectors.

- No centralized app store. MCP servers are decentralized. Anyone can deploy and share a server URL.

- ~19M monthly active users vs. ChatGPT's 900M+ weekly active users, but Claude leads in developer and coding use cases.

- MCP makes platform choice irrelevant. Build one server, connect it everywhere.

Anthropic skipped the proprietary plugin era entirely and went straight to the open standard. That bet is paying off — MCP is now the protocol that even OpenAI uses.